I've been interviewing backend engineers for the last few months, and I noticed a pattern. A painful one.

I ask a question that seems standard—almost boring—and watch as 8 out of 10 smart, capable developers walk right into a trap.

The question isn't a riddle. It's not "invert a binary tree on a whiteboard." It's a real-world problem we faced last month.

Here it is:

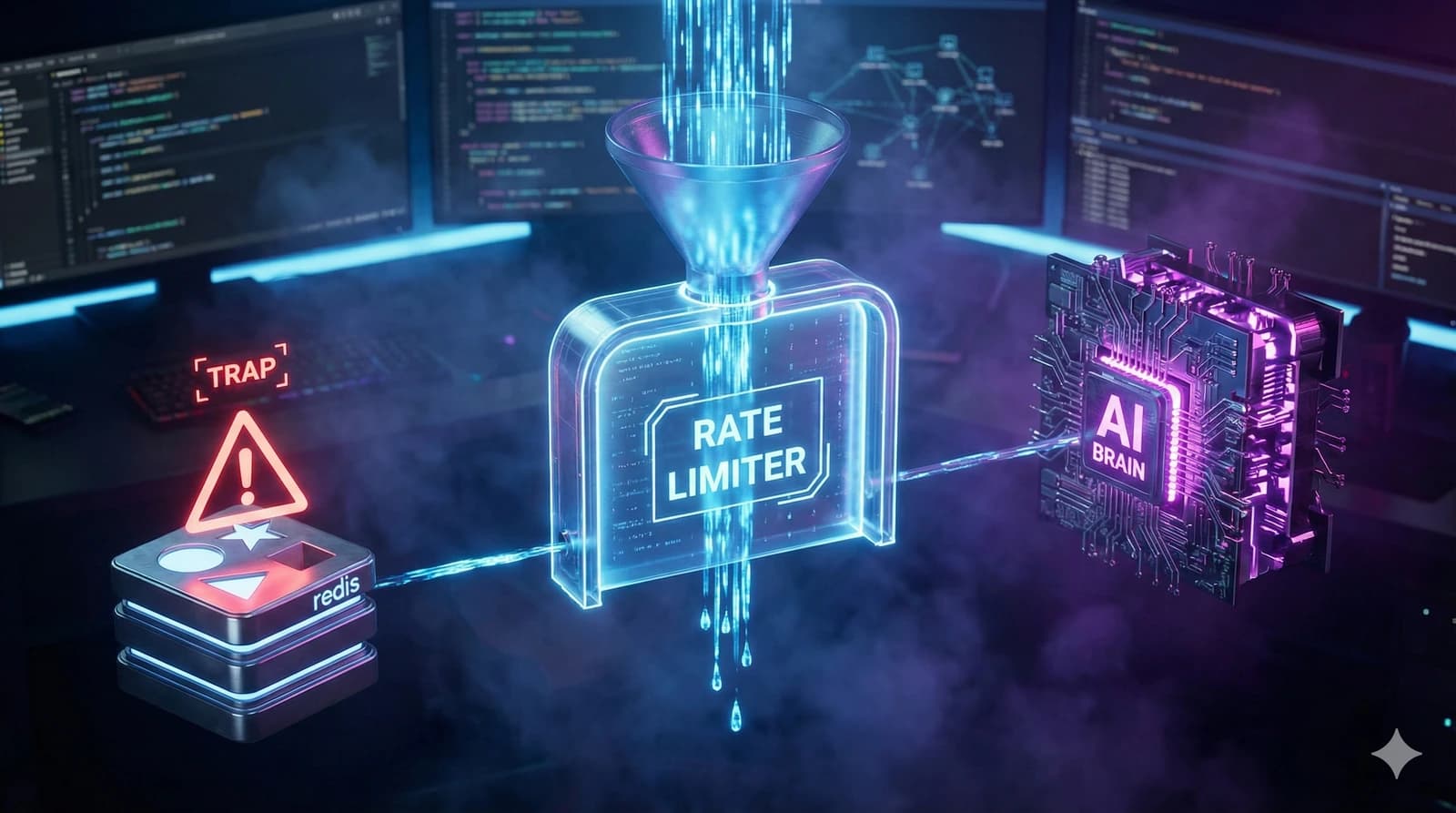

"Design a Rate Limiter for our new AI Agent API."

"Easy," they say. "I'll use Redis. Token Bucket algorithm. 100 requests per minute. Next question?"

And that's where they fail.

What AI-specific twist do interviewers add to rate limiter questions?

If this were a standard REST API where requests take 50ms, the Token Bucket answer would be perfect. But this is an AI Agent API.

Here's the context I give them next:

- Requests are slow. Generating a response can take 30-60 seconds.

- Cost is variable. One request might use 10 tokens (cheap); another might use 10,000 tokens (expensive).

- Concurrency matters. If a user sends 100 requests instantly, and each takes 60 seconds, you're holding 100 open connections. Your server memory will explode before you even hit the "rate limit."
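To put a number on the concurrency point, Little's law says the average number of in-flight requests equals the arrival rate multiplied by the time each request spends in the system. The sketch below is illustrative arithmetic only; the class and method names are made up for this post.

```java
// Illustrative arithmetic only: Little's law, L = lambda * W.
final class InFlightEstimate {
    /** Average number of concurrent requests for a given arrival rate and latency. */
    static double inFlight(double requestsPerMinute, double latencySeconds) {
        double requestsPerSecond = requestsPerMinute / 60.0;
        return requestsPerSecond * latencySeconds;
    }
}
```

At 100 requests per minute, `inFlight(100, 60)` works out to roughly 100 requests held open at once, while the same rate at 50 ms latency stays well under one. The rate limit is identical in both cases; only the latency changed.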

What trap catches 80% of candidates in rate limiter design?

Most candidates optimize for throughput (requests per second). But for LLM apps, you need to optimize for concurrency (active requests) and cost (token usage).

If you just limit "10 requests per minute," a user could send 10 massive prompts simultaneously, lock up your worker threads for a full minute, and cost you $5 in a single burst.

How do you correctly design a distributed rate limiter for AI workloads?

The candidates who passed didn't just throw "Redis" at the problem. They asked about the workload.

Here's the simple, robust solution we were looking for:

1. The "Waiting Room" (Leaky Bucket)

First, we need to protect our servers from exploding. We use a Leaky Bucket queue.

- Requests enter a queue (Redis List or SQS).

- A fixed pool of workers pulls requests from the queue, which caps concurrency (e.g., 5 jobs in flight at most).

- If the queue is full, we reject immediately (429 Too Many Requests).
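The three bullets above can be sketched in a single process with a bounded queue and a fixed worker pool. This is a minimal in-memory stand-in, assuming `java.util.concurrent` in place of the Redis List or SQS queue; `WaitingRoom` and `trySubmit` are hypothetical names, not part of our actual service.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.Callable;
import java.util.concurrent.Future;
import java.util.concurrent.RejectedExecutionException;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

// In-memory stand-in for the Redis List / SQS queue described above.
final class WaitingRoom {
    private final ThreadPoolExecutor workers;

    WaitingRoom(int maxConcurrentJobs, int maxQueued) {
        // Fixed-size pool: at most maxConcurrentJobs run at once.
        // Bounded queue: at most maxQueued wait; AbortPolicy rejects the rest.
        this.workers = new ThreadPoolExecutor(
                maxConcurrentJobs, maxConcurrentJobs,
                0L, TimeUnit.MILLISECONDS,
                new ArrayBlockingQueue<>(maxQueued),
                new ThreadPoolExecutor.AbortPolicy());
    }

    /** Returns a Future if the job was admitted, or null if the caller should answer 429. */
    <T> Future<T> trySubmit(Callable<T> job) {
        try {
            return workers.submit(job);
        } catch (RejectedExecutionException queueFull) {
            return null; // map to HTTP 429 Too Many Requests
        }
    }

    void shutdown() {
        workers.shutdownNow();
    }
}
```

With `new WaitingRoom(5, 100)`, five jobs run, up to one hundred wait, and anything beyond that is turned away at once instead of holding a connection open.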

2. The "Wallet" (Token Bucket)

Second, we need to protect our bank account.

- We don't limit requests. We limit compute units.

- Each user has a "wallet" of points per minute.

- Before processing, we estimate the cost. If the user has enough points, we deduct them and proceed; if not, we reject the request.
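As a rough illustration of the wallet idea, here is a cost-weighted token bucket kept in memory. It is a sketch under assumptions: the `Wallet` class, the continuous per-millisecond refill, and the injectable clock are choices made for this example, and a multi-instance deployment would keep the state in a shared store such as Redis.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.function.LongSupplier;

// In-memory sketch of a cost-weighted token bucket (one bucket per user).
final class Wallet {
    private static final class Bucket {
        double points;
        long lastRefillMillis;
        Bucket(double points, long now) {
            this.points = points;
            this.lastRefillMillis = now;
        }
    }

    private final ConcurrentHashMap<String, Bucket> buckets = new ConcurrentHashMap<>();
    private final double capacity;        // points a full wallet holds
    private final double refillPerMilli;  // a full wallet is restored over one minute
    private final LongSupplier clock;     // injectable so the behavior can be tested

    Wallet(double pointsPerMinute, LongSupplier clockMillis) {
        this.capacity = pointsPerMinute;
        this.refillPerMilli = pointsPerMinute / 60_000.0;
        this.clock = clockMillis;
    }

    /** Deducts estimatedCost if the user can afford it; otherwise leaves the wallet untouched. */
    boolean trySpend(String userId, double estimatedCost) {
        long now = clock.getAsLong();
        Bucket b = buckets.computeIfAbsent(userId, id -> new Bucket(capacity, now));
        synchronized (b) {
            // Refill in proportion to elapsed time, capped at capacity.
            double refill = (now - b.lastRefillMillis) * refillPerMilli;
            b.points = Math.min(capacity, b.points + refill);
            b.lastRefillMillis = now;
            if (b.points < estimatedCost) {
                return false;
            }
            b.points -= estimatedCost;
            return true;
        }
    }
}
```

A caller would build it with something like `new Wallet(100_000, System::currentTimeMillis)` and pass the estimated token count of each prompt to `trySpend`. One 10,000-token request then costs as much as a thousand 10-token requests, which is the whole point.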

Why is rate limiter design critical for AI-era system design interviews?

This question doesn't trip people up because they don't know system design. It trips them up because they're on autopilot.

They hear "Rate Limiter" and their brain auto-completes to "Redis Token Bucket."

In 2025, the best engineers aren't the ones who memorized the "Cracking the Coding Interview" book. They're the ones who pause, look at the specific constraints of this problem, and realize that an AI Agent is a very different beast than a CRUD app.

Takeaway: Next time you're in an interview, don't rush to the solution. Fall in love with the problem first. Ask about the latency. Ask about the cost.

That's how you beat the 80% fail rate.

What does a complete rate limiter architecture look like?

Let me draw the full picture that the top 20% of candidates described:

The Architecture

```
                    ┌────────────────┐
Client Request ───▶ │  API Gateway   │
                    │  (Cloudflare)  │
                    └───────┬────────┘
                            │
                    ┌───────▼────────┐
                    │  Rate Limiter  │
                    │    (Redis)     │
                    └───────┬────────┘
                            │
              ┌─────────────┼─────────────┐
              ▼             ▼             ▼
        ┌──────────┐  ┌──────────┐  ┌──────────┐
        │ Waiting  │  │  Wallet  │  │ Circuit  │
        │   Room   │  │  (Token  │  │ Breaker  │
        │ (Queue)  │  │ Budget)  │  │          │
        └──────────┘  └──────────┘  └──────────┘
```

Layer 1: The Waiting Room (Concurrency Limiter)

This is a Leaky Bucket or Semaphore that protects your servers from being overwhelmed.

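```java
import java.util.concurrent.TimeUnit;
import java.util.function.Supplier;

import org.redisson.api.RSemaphore;
import org.redisson.api.RedissonClient;
import org.springframework.stereotype.Component;

@Component
public class ConcurrencyLimiter {

    private final RSemaphore semaphore;

    public ConcurrencyLimiter(RedissonClient redisson) {
        this.semaphore = redisson.getSemaphore("ai-api:concurrency");
        // Only takes effect if the permit count is not already set in Redis.
        this.semaphore.trySetPermits(50); // Max 50 concurrent AI jobs
    }

    public <T> T executeWithLimit(Supplier<T> task) {
        boolean acquired;
        try {
            acquired = semaphore.tryAcquire(30, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt for callers
            acquired = false;
        }
        if (!acquired) {
            // TooManyRequestsException: application-defined, mapped to HTTP 429.
            throw new TooManyRequestsException("Queue full. Try again later.");
        }
        try {
            return task.get();
        } finally {
            semaphore.release();
        }
    }
}
```

Layer 2: The Wallet (Cost-Based Token Bucket)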

Instead of counting requests, count compute units.

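```java
import java.time.Duration;

import org.springframework.data.redis.core.StringRedisTemplate;
import org.springframework.stereotype.Component;

@Component
public class TokenBudgetLimiter {

    // Each user gets 100,000 tokens per minute
    private static final long TOKENS_PER_MINUTE = 100_000;

    private final StringRedisTemplate redis;

    public TokenBudgetLimiter(StringRedisTemplate redis) {
        this.redis = redis;
    }

    public boolean tryConsume(String userId, int estimatedTokens) {
        // One key per user per minute; the TTL takes the place of a scheduled reset.
        String key = "budget:" + userId + ":" + (System.currentTimeMillis() / 60_000);
        redis.opsForValue().setIfAbsent(key, Long.toString(TOKENS_PER_MINUTE), Duration.ofMinutes(2));

        Long remaining = redis.opsForValue().decrement(key, estimatedTokens);
        if (remaining == null || remaining < 0) {
            // Refund and reject. Decrement plus refund is not atomic as a pair;
            // a Lua script would close that gap under heavy contention.
            redis.opsForValue().increment(key, estimatedTokens);
            return false;
        }
        return true;
    }
}
```

Layer 3: The Circuit Breaker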

When the upstream AI model (OpenAI, Claude, etc.) is degraded, stop sending new requests.

This prevents cascading failures. If the AI provider's latency spikes from 30 seconds to 120 seconds, your "Waiting Room" fills up fast. The circuit breaker detects this and starts rejecting requests immediately instead of queueing them.
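A minimal single-process sketch of that behavior follows. Everything here is an assumption for illustration: the `CircuitBreaker` class, the rule that a slow call counts as a failure, and the thresholds passed to the constructor. In a Java service you would more likely reach for an existing library than hand-roll this.

```java
import java.util.function.LongSupplier;

// Minimal circuit breaker sketch: opens after consecutive slow or failed calls.
final class CircuitBreaker {
    enum State { CLOSED, OPEN, HALF_OPEN }

    private final int failureThreshold;  // consecutive failures before opening
    private final long slowCallMillis;   // calls slower than this count as failures
    private final long openMillis;       // how long to reject before allowing a probe
    private final LongSupplier clock;

    private State state = State.CLOSED;
    private int consecutiveFailures = 0;
    private long openedAt = 0;

    CircuitBreaker(int failureThreshold, long slowCallMillis, long openMillis, LongSupplier clockMillis) {
        this.failureThreshold = failureThreshold;
        this.slowCallMillis = slowCallMillis;
        this.openMillis = openMillis;
        this.clock = clockMillis;
    }

    /** Check before queueing a request; false means reject immediately. */
    synchronized boolean allowRequest() {
        switch (state) {
            case CLOSED:
                return true;
            case HALF_OPEN:
                return false; // a probe is already in flight
            default: // OPEN
                if (clock.getAsLong() - openedAt >= openMillis) {
                    state = State.HALF_OPEN;
                    return true; // let a single probe through
                }
                return false;
        }
    }

    /** Report the outcome of an upstream call. */
    synchronized void record(boolean succeeded, long durationMillis) {
        if (state == State.OPEN) {
            return; // ignore stragglers that started before the breaker opened
        }
        boolean healthy = succeeded && durationMillis <= slowCallMillis;
        if (healthy) {
            consecutiveFailures = 0;
            state = State.CLOSED;
            return;
        }
        consecutiveFailures++;
        if (state == State.HALF_OPEN || consecutiveFailures >= failureThreshold) {
            state = State.OPEN;
            openedAt = clock.getAsLong();
        }
    }

    synchronized State state() {
        return state;
    }
}
```

With a slow-call threshold of 60 seconds, three consecutive 120-second responses would open the breaker, and new requests would be rejected up front until a later probe comes back healthy.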

What other rate limiting algorithms should you know?

For completeness, here are the four algorithms you should be ready to discuss:

| Algorithm | Best For | Weakness |
|---|---|---|
| Token Bucket | Bursty traffic, API quotas | Doesn't limit concurrency |
| Leaky Bucket | Smoothing traffic, queuing | Fixed rate, can't handle bursts |
| Fixed Window | Simple rate counting | Boundary burst problem |
| Sliding Window Log | Precise rate limiting | Memory-intensive at scale |
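The last two rows are easiest to see side by side. The sketch below uses two hypothetical in-memory classes, `FixedWindowCounter` and `SlidingWindowLog`, both with an injectable clock so the boundary case can be reproduced. It is meant to illustrate the trade-off, not to serve as a production limiter.

```java
import java.util.ArrayDeque;
import java.util.function.LongSupplier;

// Counts requests per aligned window; the counter resets when the window number changes.
final class FixedWindowCounter {
    private final int limit;
    private final long windowMillis;
    private final LongSupplier clock;
    private long currentWindow = -1;
    private int count = 0;

    FixedWindowCounter(int limit, long windowMillis, LongSupplier clockMillis) {
        this.limit = limit;
        this.windowMillis = windowMillis;
        this.clock = clockMillis;
    }

    synchronized boolean tryAcquire() {
        long window = clock.getAsLong() / windowMillis;
        if (window != currentWindow) {
            currentWindow = window;
            count = 0;
        }
        if (count >= limit) {
            return false;
        }
        count++;
        return true;
    }
}

// Keeps one timestamp per admitted request, which is where the memory cost comes from.
final class SlidingWindowLog {
    private final int limit;
    private final long windowMillis;
    private final LongSupplier clock;
    private final ArrayDeque<Long> timestamps = new ArrayDeque<>();

    SlidingWindowLog(int limit, long windowMillis, LongSupplier clockMillis) {
        this.limit = limit;
        this.windowMillis = windowMillis;
        this.clock = clockMillis;
    }

    synchronized boolean tryAcquire() {
        long now = clock.getAsLong();
        // Drop entries that have aged out of the trailing window.
        while (!timestamps.isEmpty() && now - timestamps.peekFirst() >= windowMillis) {
            timestamps.pollFirst();
        }
        if (timestamps.size() >= limit) {
            return false;
        }
        timestamps.addLast(now);
        return true;
    }
}
```

With a limit of 10 per minute, ten requests at 59.999 s followed by ten more at 60.000 s all pass the fixed window, so twenty requests land within a millisecond. The sliding log admits only the first ten, at the price of storing a timestamp for each.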

The winning answer for AI workloads combines Leaky Bucket (concurrency) with Token Bucket (cost). Neither alone is sufficient.
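One way to picture the combination is a single admission check that consults both limits and refunds the wallet when no slot is free. This is a single-user, in-memory sketch; `AdmissionGate` and its `Decision` values are hypothetical names, and the shared-state version would live in Redis as in the layers above.

```java
import java.util.concurrent.Semaphore;
import java.util.concurrent.atomic.AtomicLong;

// Single-user, in-memory sketch of combining the cost limit with the concurrency limit.
final class AdmissionGate {
    enum Decision { ADMITTED, OVER_BUDGET, NO_CAPACITY }

    private final Semaphore slots;    // concurrency limit (the "waiting room")
    private final AtomicLong budget;  // remaining compute units (the "wallet")

    AdmissionGate(int maxConcurrent, long budgetUnits) {
        this.slots = new Semaphore(maxConcurrent);
        this.budget = new AtomicLong(budgetUnits);
    }

    Decision tryAdmit(long estimatedCost) {
        // Charge the wallet first so an over-budget request never occupies a slot.
        if (budget.addAndGet(-estimatedCost) < 0) {
            budget.addAndGet(estimatedCost); // refund
            return Decision.OVER_BUDGET;
        }
        if (!slots.tryAcquire()) {
            budget.addAndGet(estimatedCost); // refund: the request never ran
            return Decision.NO_CAPACITY;
        }
        return Decision.ADMITTED;
    }

    /** Call exactly once for each ADMITTED request when it finishes. */
    void release() {
        slots.release();
    }

    long remainingBudget() {
        return budget.get();
    }
}
```

The two rejections mean different things to the client: `OVER_BUDGET` says "you have spent your allowance, come back next minute," while `NO_CAPACITY` says "the system is busy, retry shortly."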

