I hit a StackOverflowError for the first time back in college. I was writing a recursive Fibonacci function in Java, and it worked fine for small inputs. Then I tried fib(50000) and my JVM just died. That's when I first heard about tail-call optimization, and honestly, I couldn't believe every language doesn't have it.

Tail-call optimization (TCO) is one of those features that, once you understand it, makes you wonder why it's not everywhere. Some languages have had it for decades. Others, like Java, have been debating it for just as long. Let me break down what it is, how it works, and where you can actually use it.

What is tail-call optimization and how does it work?

Tail-call optimization is a compiler technique that transforms recursive functions so they reuse the current stack frame instead of creating new ones. The result? You can recurse to any depth without ever running out of stack space.

To understand why this matters, you need to understand what happens when a function calls itself.

The stack frame problem

Every time you call a function, the runtime pushes a new frame onto the call stack. That frame holds the function's local variables, parameters, and return address. When the function returns, its frame gets popped off.

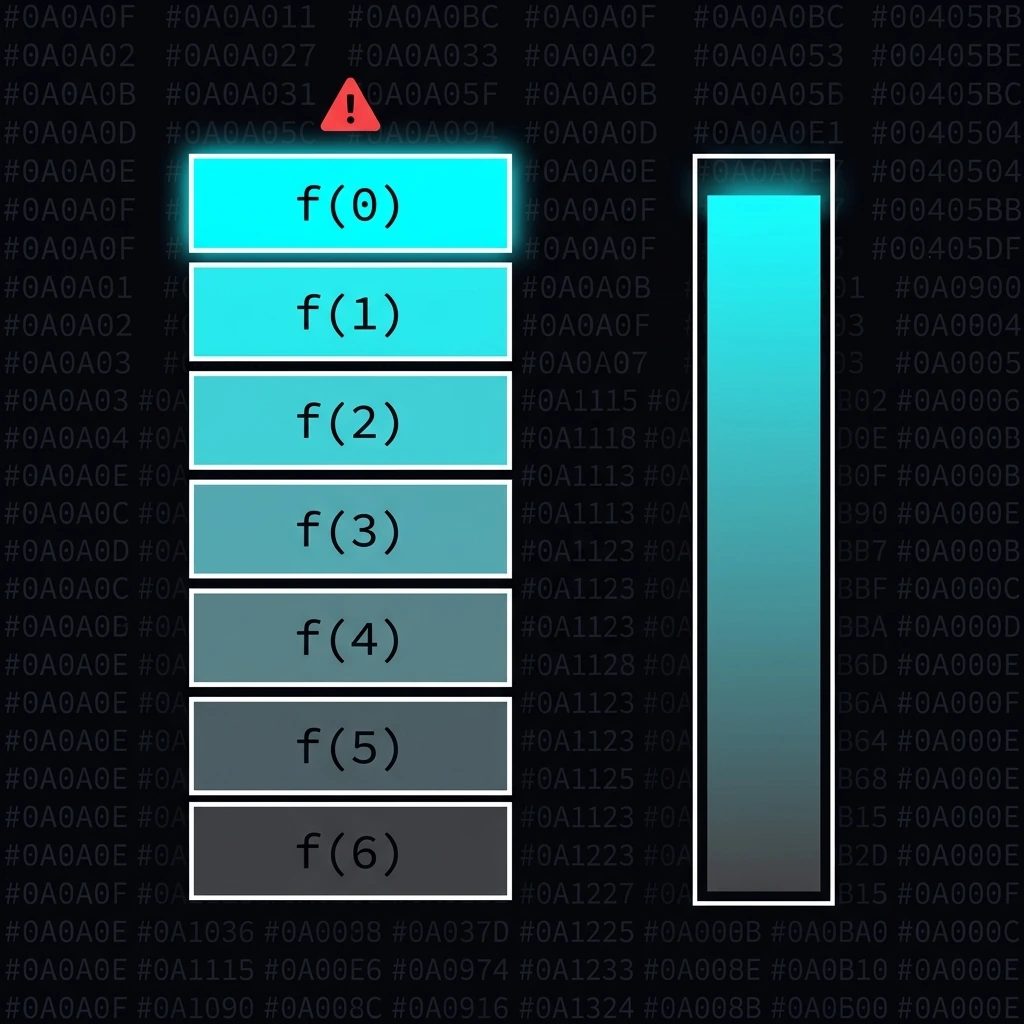

With recursion, each recursive call adds another frame. If you're calculating factorial(10000), that's 10,000 stack frames sitting in memory at once. Most runtimes allocate between 512KB and 1MB for the stack. Go deep enough, and you're done.

Here's a classic factorial in Clojure without TCO:

(defn factorial [n]

(if (zero? n)

1N

(* n (factorial (dec n)))))Call (factorial 6) and watch the stack grow:

(factorial 6)

(* 6 (factorial 5))

(* 6 (* 5 (factorial 4)))

(* 6 (* 5 (* 4 (factorial 3))))

(* 6 (* 5 (* 4 (* 3 (factorial 2)))))

(* 6 (* 5 (* 4 (* 3 (* 2 (factorial 1))))))

(* 6 (* 5 (* 4 (* 3 (* 2 (* 1 (factorial 0)))))))

That's 7 frames stacked up before a single multiplication happens. Each frame has to wait for the one below it to return. For factorial(100000), you'd need 100,000 frames. Your stack won't survive that.

How TCO fixes this

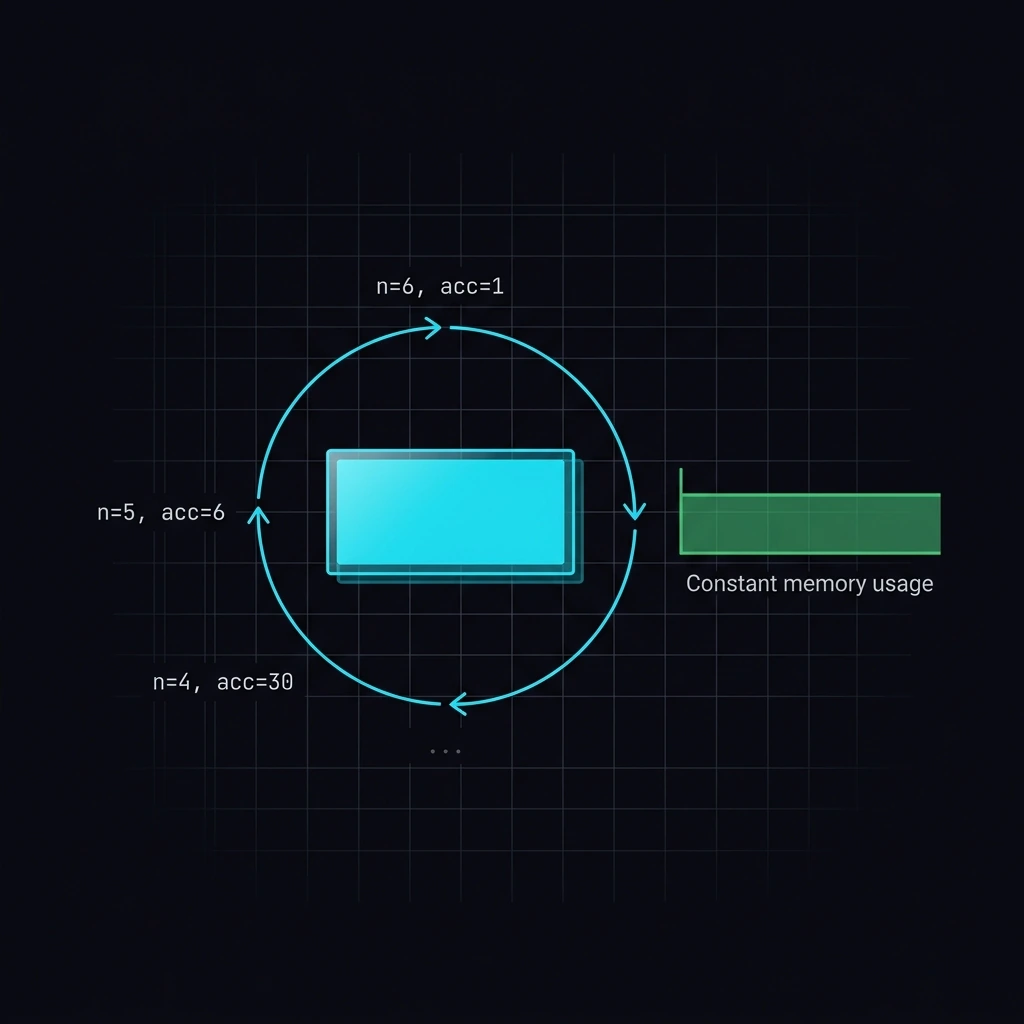

The trick is restructuring the recursion so the recursive call is the last thing the function does. No multiplication after it. No further computation. Just the recursive call, and nothing else.

Here's the tail-recursive version in Clojure:

(defn factorial

([n] (factorial n 1N))

([n acc]

(if (zero? n)

acc

(recur (dec n) (* acc n)))))The key difference: instead of waiting for the recursive call to return and then multiplying, we pass the running total as an accumulator parameter. The multiplication happens before the recursive call, not after.

Now the stack looks completely different:

(factorial 6 1)

(factorial 5 6) ;; reused frame

(factorial 4 30) ;; reused frame

(factorial 3 120) ;; reused frame

(factorial 2 360) ;; reused frame

(factorial 1 720) ;; reused frame

(factorial 0 720) ;; reused frame

=> 720

One frame. Reused every time. The compiler sees that nothing happens after the recursive call, so it just jumps back to the top of the function with new arguments. It's basically a while loop under the hood.

Which languages actually support tail-call optimization?

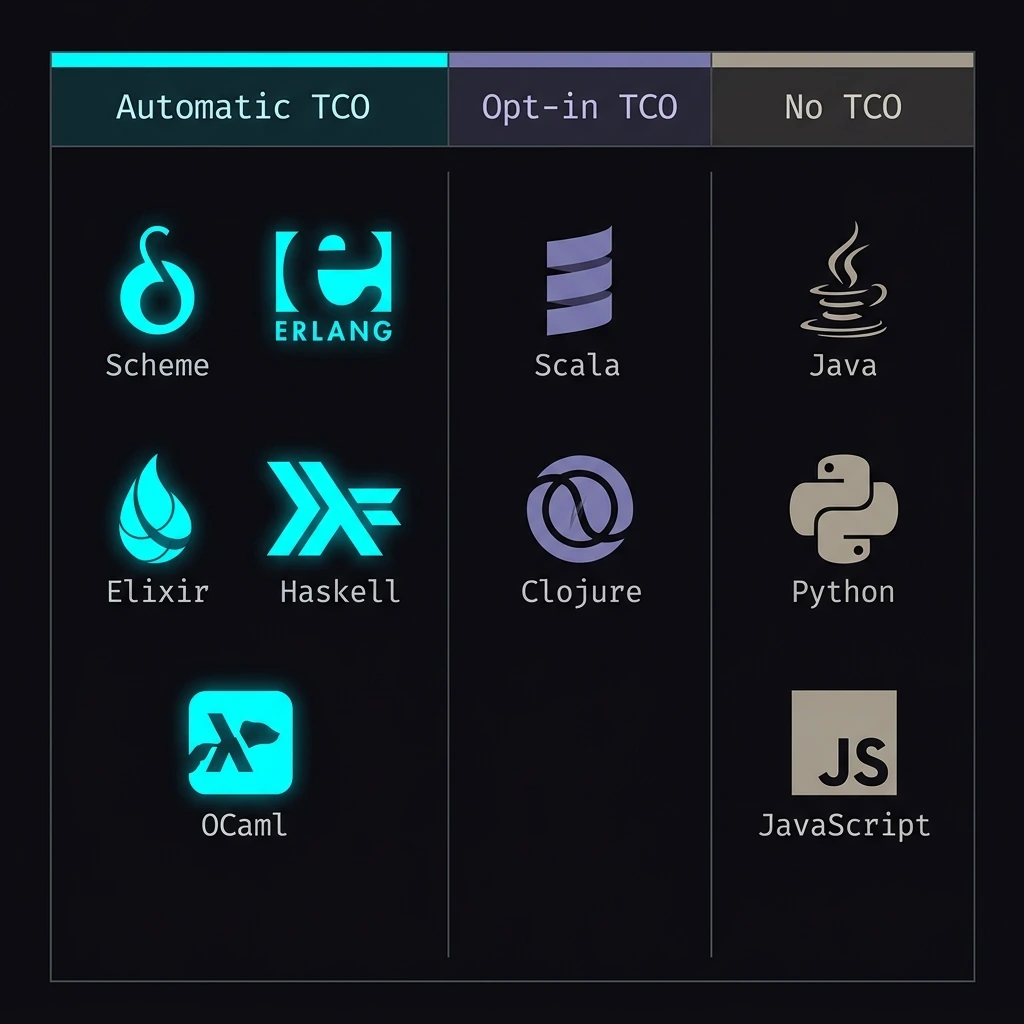

Not all languages treat TCO the same way. Some do it automatically, some give you tools to opt in, and some just... don't.

Languages with automatic TCO

These languages optimize tail calls without you having to ask:

Scheme and Racket were built around TCO. The Scheme specification (R5RS and later) actually requires implementations to support proper tail calls. It's not optional. This is why Scheme is the go-to language for teaching recursion in computer science programs.

(define (factorial n acc)

(if (= n 0)

acc

(factorial (- n 1) (* acc n))))

(factorial 1000000 1) ;; works fine, no stack overflowErlang and Elixir also do TCO automatically. In the BEAM VM (which powers both), tail-recursive functions are the standard way to write loops. There are no for or while loops in Elixir. Everything is recursion, and TCO makes it work.

defmodule Math do

def factorial(0, acc), do: acc

def factorial(n, acc), do: factorial(n - 1, n * acc)

end

Math.factorial(1_000_000, 1) # runs in constant stack spaceHaskell supports TCO, but it gets tricky because of lazy evaluation. A tail call in Haskell might build up unevaluated thunks (delayed computations) instead of actual values. You sometimes need to force strict evaluation with seq or bang patterns to get the full benefit.

OCaml handles TCO cleanly. The compiler turns tail-recursive functions into loops automatically. OCaml's pattern matching and functional style make tail recursion feel natural.

Languages with opt-in TCO

Scala gives you the @tailrec annotation. It doesn't enable TCO. It tells the compiler to verify that your function is actually tail-recursive and throw a compile error if it's not. The Scala compiler already optimizes tail calls when it can, but @tailrec gives you a guarantee.

import scala.annotation.tailrec

@tailrec

def factorial(n: BigInt, acc: BigInt = 1): BigInt = {

if (n <= 1) acc

else factorial(n - 1, n * acc)

}

factorial(100000) // works perfectlyIf you accidentally write non-tail-recursive code with @tailrec, the compiler tells you:

error: could not optimize @tailrec annotated method factorial:

it contains a recursive call not in tail position

That's really helpful. You catch the problem at compile time instead of discovering it in production when your app crashes.

Clojure takes a different approach with recur. The JVM doesn't support TCO natively, so Clojure can't just optimize tail calls transparently. Instead, recur explicitly tells the compiler "jump back to the top of this function." If you try to use recur in a non-tail position, you get a compile error.

(defn factorial [n]

(loop [i n acc 1N]

(if (zero? i)

acc

(recur (dec i) (* acc i)))))Rich Hickey (Clojure's creator) made this a conscious design choice. He wanted developers to know when they're relying on tail-call behavior rather than assuming the compiler will handle it.

Languages that don't support TCO

Java is the big one. TCO has been discussed in the Java community for over 20 years. There was even a Project Loom mailing list discussion about it. But it keeps getting deprioritized.

Why? A few reasons:

-

Stack traces are sacred in Java. TCO eliminates intermediate stack frames. That means your stack trace in an exception won't show every call. For a language where developers rely heavily on stack traces for debugging, that's a real problem.

-

Security model depends on stack inspection. Java's

SecurityManager(deprecated but still influential) walks the call stack to check permissions. TCO would break that. -

The JVM wasn't designed for it. Adding TCO to the JVM would require changes to the bytecode verifier and the runtime. It's not impossible, but it's a significant engineering effort for a feature that has workarounds.

-

Iterative style is idiomatic. Java developers already use

forandwhileloops. The demand for TCO is lower than in functional languages where recursion is the primary looping mechanism.

JavaScript is an interesting case. The ES2015 (ES6) spec includes proper tail calls. It's in the specification. But only Safari/WebKit actually implements it. V8 (Chrome, Node.js) and SpiderMonkey (Firefox) both implemented it experimentally and then removed it. The V8 team argued it made debugging harder and proposed an alternative called "syntactic tail calls" that would require an explicit keyword. That proposal stalled, and here we are.

Python doesn't support TCO either. Guido van Rossum wrote a blog post explaining why: he believes stack traces are more valuable than tail-call optimization, and Python's default recursion limit of 1,000 is intentional.

How can you work around the lack of TCO?

If you're stuck in a language without TCO, you have options.

Convert to iteration

The simplest approach. Any tail-recursive function can be mechanically converted to a loop:

public static BigInteger factorial(int n) {

BigInteger acc = BigInteger.ONE;

for (int i = n; i > 0; i--) {

acc = acc.multiply(BigInteger.valueOf(i));

}

return acc;

}It's not as elegant as recursion, but it works and it's what most Java developers do.

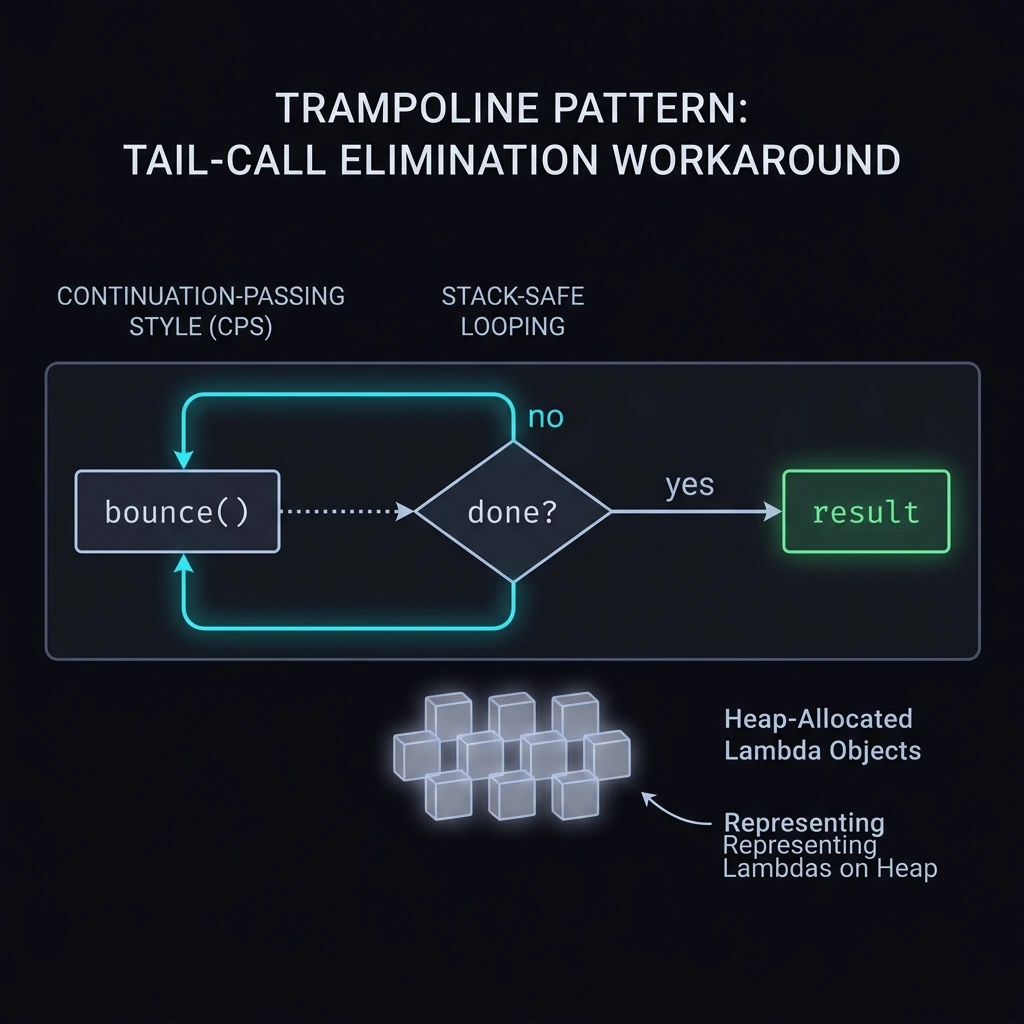

The trampoline pattern

A trampoline is a loop that repeatedly calls a function until it produces a result instead of another function call. It simulates TCO by moving the recursion from the call stack to the heap.

@FunctionalInterface

interface Trampoline<T> {

Trampoline<T> bounce();

default boolean isDone() { return false; }

default T result() { throw new UnsupportedOperationException(); }

static <T> Trampoline<T> done(T value) {

return new Trampoline<T>() {

public Trampoline<T> bounce() { return this; }

public boolean isDone() { return true; }

public T result() { return value; }

};

}

static <T> T execute(Trampoline<T> trampoline) {

while (!trampoline.isDone()) {

trampoline = trampoline.bounce();

}

return trampoline.result();

}

}public static Trampoline<BigInteger> factorial(int n, BigInteger acc) {

if (n <= 1) return Trampoline.done(acc);

return () -> factorial(n - 1, acc.multiply(BigInteger.valueOf(n)));

}

// Usage

BigInteger result = Trampoline.execute(factorial(100000, BigInteger.ONE));The trampoline works because each step returns a lambda (stored on the heap) instead of making a recursive call (stored on the stack). The heap is much bigger than the stack, so you can handle very deep recursion.

It's clever, but it adds overhead from all the lambda allocations and the indirection. For most Java code, just use a loop.

When does tail-call optimization actually matter in practice?

TCO isn't just an academic exercise. There are real scenarios where it makes a difference.

Tree and graph traversal

Walking deeply nested data structures (think ASTs, file system trees, or deeply nested JSON) can blow the stack without TCO. Functional languages handle this naturally with tail-recursive traversals using continuation-passing style.

State machines and protocol handlers

In Erlang/Elixir, processes are often implemented as tail-recursive functions that handle messages in a loop. The GenServer behavior in Elixir is built on this pattern. Each state transition is a tail call, and the process runs forever without growing the stack.

defmodule Counter do

def loop(count) do

receive do

:increment -> loop(count + 1)

{:get, caller} ->

send(caller, count)

loop(count)

end

end

endCompiler and interpreter implementations

If you're writing a programming language that supports recursion (and most do), your interpreter needs to handle deep recursion without itself overflowing. TCO in the host language makes this straightforward.

Stream processing

Processing large sequences element by element (like a log file with millions of lines) maps naturally to recursive patterns. TCO means you can write these as elegant recursive functions instead of imperative loops.

What's the real takeaway here?

Tail-call optimization is one of those features that separates languages designed for recursion from languages that merely tolerate it. Scheme, Elixir, and Haskell treat recursion as a first-class control flow mechanism. Java, Python, and (in practice) JavaScript treat it as a nice-to-have that shouldn't come at the expense of stack traces and debugging.

I don't think Java will ever get TCO. And honestly, with Virtual Threads and the continued push toward imperative patterns in Java, the demand just isn't there. But if you ever work in Scala, Clojure, or Elixir, understanding TCO isn't optional. It's how you write loops.

The next time you hit a StackOverflowError, don't just bump the stack size with -Xss. Ask yourself: can I restructure this as a tail call? And if your language doesn't support TCO, at least you know why, and what your options are.

For more on tail-call optimization, see the Scheme R7RS specification which mandates proper tail calls, Guido van Rossum's post on why Python doesn't support TCO, and the Scala @tailrec documentation.

Keep Reading

- Mastering Virtual Threads in Java 25 — If Java won't give us TCO, at least it gave us Virtual Threads for concurrency.

- How I Turned Daily Problem Solving into a DSA Habit — Recursion practice is a big part of building strong DSA fundamentals.

- Java Interview Questions 2025 — TCO and recursion concepts come up in advanced Java interviews.