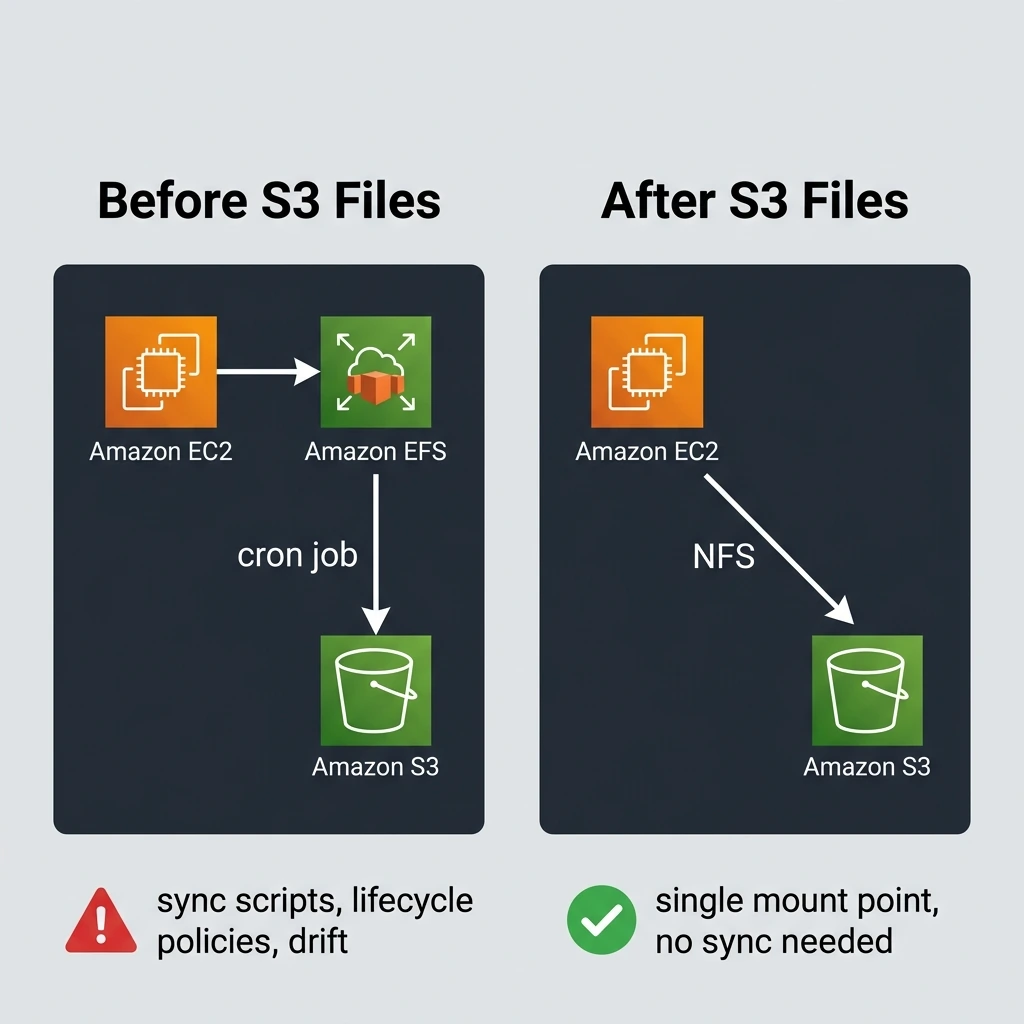

I've been running EFS-to-S3 sync jobs for two years. Cron schedules, lifecycle policies, rsync scripts that break every time someone changes a directory structure. All because S3 couldn't speak file system.

That changed this week.

On April 7, 2026, AWS announced Amazon S3 Files, a feature that lets you mount any S3 bucket as a shared NFS file system. No gateway. No third-party tool. No data copies. Your applications read and write files, and S3 stores them. That's it.

And quietly, on April 6, AWS also started disabling SSE-C encryption by default on all new S3 buckets. If you're managing encryption keys manually, that one needs your attention too.

Let me walk through both changes.

What is Amazon S3 Files and how does it work?

Amazon S3 Files gives S3 buckets a fully-featured file system interface using NFS v4.2. You can mount a bucket on EC2, Lambda, EKS, ECS, Fargate, or AWS Batch and interact with your data using standard file operations: open(), read(), write(), ls, cp, mv. No SDK. No API calls. Just a mount point.

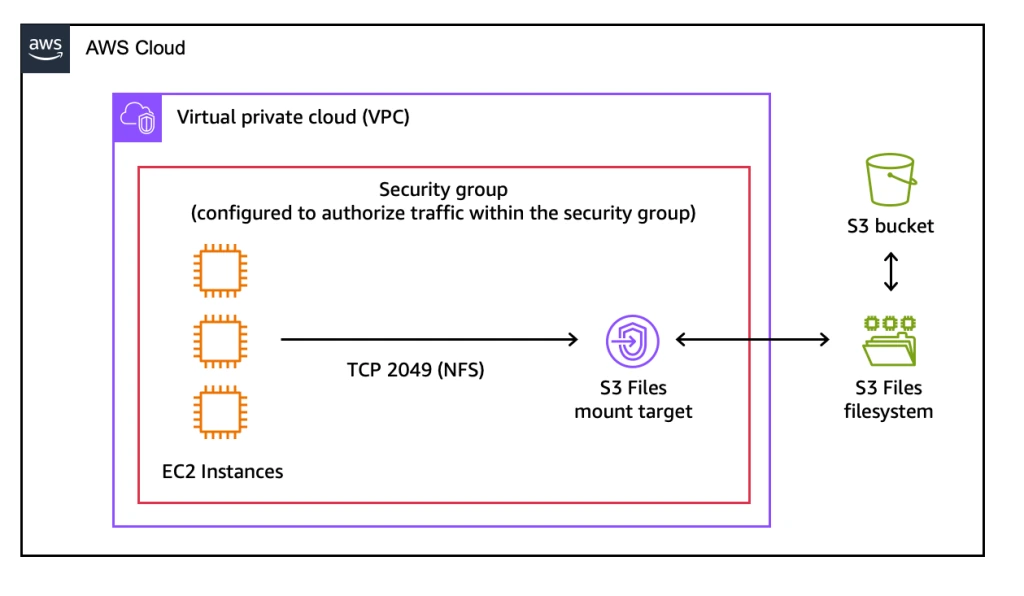

Architecture overview of Amazon S3 Files. Source: AWS News Blog

S3 is now the first and only cloud object store that provides native file system access while keeping all data in object storage. Your objects don't move to a separate file system. They stay in S3 with all the durability, lifecycle policies, and access controls you already have.

S3 Files is generally available in 34 AWS Regions as of launch day.

The stage and commit model

This is the part that surprised me. Instead of translating every file write into an immediate S3 PUT, S3 Files batches changes and commits them to S3 roughly every 60 seconds. AWS borrowed this concept from version control.

Here's what that means in practice:

- You write a file through the NFS mount

- The write lands in a caching layer (built on EFS infrastructure)

- S3 Files aggregates writes within a 60-second window

- Multiple writes to the same file become a single S3 PUT

- The committed object appears in S3 with full consistency

This batching has two practical benefits. First, it cuts your S3 request costs because ten rapid writes to the same file become one PUT, not ten. Second, if you're using S3 versioning, you don't end up with ten versions of a file that changed in under a minute.
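The effect of batching on request counts can be sketched with a toy model. This assumes fixed, back-to-back windows of 60 seconds and that every write to one file inside a window collapses into one PUT; how S3 Files actually aligns its windows is not stated in the announcement, and `count_puts` is a hypothetical helper, not part of any AWS tool.

```shell
#!/bin/sh
# Toy model: count the S3 PUTs produced for ONE file under a fixed commit window.
# Usage: count_puts WINDOW_SECONDS WRITE_TIME [WRITE_TIME ...]
# Write times are seconds since an arbitrary start. Window alignment is an
# assumption made for illustration only.
count_puts() {
    window=$1
    shift
    printf '%s\n' "$@" |
        awk -v w="$window" '
            NF  { seen[int($1 / w)] = 1 }            # one bucket per window
            END { n = 0; for (k in seen) n++; print n }'
}

# Ten writes inside the first minute: prints 1
count_puts 60 1 5 9 14 20 27 33 41 50 58

# Writes that land in three different windows: prints 3
count_puts 60 10 70 75 130
```

With versioning enabled, the same count is also the number of object versions the file would accumulate under this model.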

But it also means there's a ~60-second lag before file changes are visible on the S3 side. If your workflow needs immediate S3 API visibility of written data, you need to account for that delay.

When there's a conflict (say, someone writes to a file through NFS while another process updates the same object via the S3 API), S3 remains authoritative. The file-side version gets moved to a lost+found directory with metrics for visibility. No silent data loss.

The caching layer under the hood

When you create an S3 Files file system, AWS provisions a caching layer backed by EFS infrastructure. This cache holds three things:

- Recently read files: Hot reads come from cache with sub-millisecond latency

- Recently written files: Staged writes waiting for the next commit cycle

- Metadata: Directory listings, file attributes, timestamps

Small file reads are served entirely from the cache. Large file reads (over 1MB) stream directly from S3 and don't incur S3 Files charges. The aggregate read throughput can reach multiple terabytes per second.

This design is what makes S3 Files cost-effective. You pay file system rates ($0.30/GB-month) only on the data that's actively cached. A petabyte bucket where only 500GB is actively read? You're paying S3 rates for the petabyte and file system rates for the 500GB.
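To put rough numbers on the petabyte example, here is a small sketch of the storage split. The $0.30/GB-month cache rate comes from the pricing above; the $0.023/GB-month S3 rate is a placeholder I'm assuming for illustration (real S3 pricing is tiered and varies by Region), and `monthly_storage_cost` is a hypothetical helper.

```shell
#!/bin/sh
# monthly_storage_cost TOTAL_GB CACHED_GB S3_RATE CACHE_RATE
# Storage only: S3 rate on everything in the bucket, plus the file system
# rate on whatever is actively cached. Request and transfer charges are
# not included.
monthly_storage_cost() {
    awk -v total="$1" -v cached="$2" -v s3="$3" -v fs="$4" \
        'BEGIN { printf "%.2f\n", total * s3 + cached * fs }'
}

# 1 PB (taken as 1,000,000 GB) in S3 with 500 GB cached.
# S3 rate of 0.023 is an assumed placeholder. Prints 23150.00
monthly_storage_cost 1000000 500 0.023 0.30

# The same petabyte billed entirely at the file system rate. Prints 300000.00
monthly_storage_cost 0 1000000 0.023 0.30
```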

How do you set up S3 Files on an EC2 instance?

The setup is straightforward. Here's the full flow:

    # Step 1: Create an S3 Files file system linked to your bucket
    aws s3api create-file-system \
      --bucket my-data-bucket \
      --file-system-name my-fs

    # Step 2: Get the mount target DNS name
    aws s3api describe-file-system \
      --bucket my-data-bucket \
      --file-system-name my-fs \
      --query 'FileSystem.MountTargets[0].DnsName' \
      --output text

    # Step 3: Mount on your EC2 instance
    sudo mount -t nfs4 \
      -o nfsvers=4.2,rsize=1048576,wsize=1048576 \
      fs-mount-target.efs.us-east-1.amazonaws.com:/ \
      /mnt/s3data

    # Step 4: Use it like any filesystem
    ls /mnt/s3data
    cp local-file.csv /mnt/s3data/uploads/
    cat /mnt/s3data/reports/quarterly.json

Once mounted, every application on that instance can access S3 data through normal file I/O. Python scripts, Java apps, shell scripts, legacy C++ binaries. Nothing needs to know it's talking to S3.
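One thing worth guarding against in scripts: if the mount is missing, writes to /mnt/s3data silently land on the local disk instead. A minimal sketch of a pre-flight check on Linux follows. It reads /proc/mounts, and both function names are hypothetical helpers of mine rather than anything shipped with S3 Files.

```shell
#!/bin/sh
# mount_fstype MOUNTPOINT [MOUNTS_FILE]
# Prints the file system type recorded for MOUNTPOINT, or nothing if it is
# not mounted. MOUNTS_FILE defaults to /proc/mounts (Linux only).
mount_fstype() {
    awk -v mp="$1" '
        $2 == mp { fstype = $3 }                 # last matching entry wins
        END      { if (fstype != "") print fstype }
    ' "${2:-/proc/mounts}"
}

# require_nfs_mount MOUNTPOINT [MOUNTS_FILE]
# Succeeds only if MOUNTPOINT is currently an NFS mount.
require_nfs_mount() {
    case "$(mount_fstype "$@")" in
        nfs|nfs4) return 0 ;;
        *) echo "not an NFS mount: $1" >&2; return 1 ;;
    esac
}

# Example:
# require_nfs_mount /mnt/s3data && cp local-file.csv /mnt/s3data/uploads/
```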

For persistent mounts across reboots, add it to /etc/fstab:

    # /etc/fstab entry for S3 Files
    fs-mount-target.efs.us-east-1.amazonaws.com:/ /mnt/s3data nfs4 nfsvers=4.2,rsize=1048576,wsize=1048576,_netdev 0 0

Where does S3 Files beat EFS (and where does it not)?

This is the question I've been testing all week. S3 Files isn't a drop-in EFS replacement for every workload, but for specific patterns it's clearly better.

S3 Files wins

| Scenario | Why S3 Files |
|---|---|
| Large datasets, small active working set | Pay S3 rates on cold data, file system rates only on hot data |
| Legacy app migration to S3 | Zero code changes needed, just mount and go |
| AI/ML training data pipelines | Read training data as files, store as S3 objects |
| Agentic AI workloads | Shared workspace across multiple compute instances |
| Multi-service data sharing | Multiple EKS pods or Lambda functions reading the same dataset |

EFS still wins

| Scenario | Why EFS |
|---|---|
| All data is hot, constant read/write | EFS avoids the commit delay and S3 request overhead |
| Sub-second write visibility needed | S3 Files has a ~60-second commit lag |
| Windows workloads (SMB) | S3 Files only supports NFS, no SMB |
| Hard link requirements | S3 Files doesn't support hard links |
| Bucket exceeds 50 million objects | AWS warns about performance at this scale |

Pricing comparison

The pricing math depends entirely on your access pattern:

- S3 Files cached storage: $0.30/GB-month (only for actively cached data)

- S3 Files reads (small files): $0.03/GB from cache

- S3 Files reads (large files, 1MB+): $0 from S3 Files (standard S3 GET charges apply)

- S3 Files writes: $0.06/GB

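Compare that to EFS, where performance-optimized standard storage runs $0.30/GB-month and reads cost $0.03/GB. The per-GB rates are nearly identical. The difference is what they apply to: EFS bills the storage rate on everything you store, while S3 Files bills it only on the cached working set. If you have 10TB in a bucket but only touch 200GB regularly, S3 Files costs a fraction of what an equivalent EFS setup would cost.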
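The per-GB read and write charges listed above can be turned into a quick monthly estimate. This is a sketch using only the rates quoted in this post; the traffic volumes are made-up inputs, `monthly_io_cost` is a hypothetical helper, and standard S3 request charges for large reads are left out.

```shell
#!/bin/sh
# monthly_io_cost SMALL_READ_GB LARGE_READ_GB WRITE_GB
# Applies the S3 Files rates quoted in this post:
#   small-file reads from cache  $0.03/GB
#   large-file reads (1MB+)      $0 from S3 Files (S3 GET charges not modeled)
#   writes                       $0.06/GB
monthly_io_cost() {
    awk -v small="$1" -v large="$2" -v wr="$3" 'BEGIN {
        small_rate = 0.03; large_rate = 0.00; write_rate = 0.06
        printf "%.2f\n", small * small_rate + large * large_rate + wr * write_rate
    }'
}

# 100 GB of small reads, 900 GB of large reads, 50 GB of writes. Prints 6.00
monthly_io_cost 100 900 50
```

The example shows why the read mix matters: the 900 GB of large-file reads add nothing to the S3 Files portion of the bill.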

What are the gotchas you should know before adopting S3 Files?

I ran into a few things during my initial testing that are worth flagging.

The 60-second commit window is real. If you write a file via NFS and immediately try to read it through the S3 API (using aws s3 cp or a direct GET), it won't be there yet. Your application logic needs to handle this. For workflows that do writes via NFS and reads via S3 API, consider adding a short wait or checking for object existence.
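A minimal sketch of the "check for object existence" approach: a generic retry loop that you can wrap around `aws s3api head-object`. The `wait_until` helper is hypothetical, the bucket and key in the example are placeholders, and the example assumes the mount root maps to the bucket root.

```shell
#!/bin/sh
# wait_until TIMEOUT_SECONDS INTERVAL_SECONDS COMMAND [ARGS...]
# Re-runs COMMAND until it succeeds or roughly TIMEOUT_SECONDS have been
# spent sleeping. Returns 0 on success, 1 on timeout.
wait_until() {
    timeout=$1
    interval=$2
    shift 2
    waited=0
    while ! "$@" >/dev/null 2>&1; do
        if [ "$waited" -ge "$timeout" ]; then
            return 1
        fi
        sleep "$interval"
        waited=$((waited + interval))
    done
    return 0
}

# Example (placeholder bucket and key), allowing for two commit windows:
# cp local-file.csv /mnt/s3data/uploads/
# wait_until 120 5 aws s3api head-object \
#     --bucket my-data-bucket --key uploads/local-file.csv
```

The AWS CLI also ships a built-in waiter, `aws s3api wait object-exists`, which polls the same HeadObject call on a fixed schedule. The loop above is only useful if you want to control the timeout and interval yourself.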

NFS file locks don't protect against S3 API access. If you lock a file through NFS, that lock only applies to other NFS clients. Someone using the S3 API directly can still modify the object. This isn't a bug. It's how the boundary between file system and object store works. But it can bite you if mixed access isn't on your radar.

The 50-million object warning is something to watch. AWS recommends caution when a mounted bucket contains more than 50 million objects. Directory listings and metadata operations can slow down at that scale. If you're dealing with buckets that large, consider using S3 prefixes to scope your mount.

No pNFS, Kerberos, or nconnect support. If your NFS setup depends on parallel NFS, Kerberos authentication, NFSv4 data retention, or the nconnect mount option, those aren't available yet at GA. Standard NFS v4.2 features work fine.

SMB is not supported. Windows workloads that need file system access to S3 still need FSx or a gateway solution.

Why did AWS disable SSE-C encryption by default?

This change flew under the radar next to S3 Files, but it affects every new bucket created after April 6, 2026.

SSE-C (Server-Side Encryption with Customer-Provided Keys) lets you bring your own encryption key on every PUT and GET request. S3 encrypts and decrypts using your key but never stores it. The idea is maximum control. The reality is operational risk. Lose the key, lose the data forever. AWS can't recover it for you. There's no "forgot my password" option.

AWS KMS solved this years ago with customer-managed keys (CMKs) that give you full ownership and control, plus key rotation, auditing through CloudTrail, and recovery options. For most workloads, KMS does everything SSE-C does, minus the footgun.

So AWS made SSE-C opt-in instead of opt-out. Here's how the rollout works:

What changes for new buckets

Every new general-purpose S3 bucket created after April 6, 2026 has SSE-C disabled by default. If you try to upload an object with SSE-C headers, you'll get an access denied error unless you explicitly enable SSE-C first.

To enable SSE-C on a new bucket:

    # Explicitly allow SSE-C on a new bucket
    aws s3api put-bucket-encryption \
      --bucket my-new-bucket \
      --server-side-encryption-configuration '{
        "Rules": [{
          "ApplyServerSideEncryptionByDefault": {
            "SSEAlgorithm": "AES256"
          },
          "BucketKeyEnabled": false
        }],
        "AllowSSEC": true
      }'

What changes for existing buckets

This is the part that might catch people off guard. AWS is also disabling SSE-C on existing buckets that have zero SSE-C encrypted objects. If you created a bucket, never used SSE-C, but your automation code includes SSE-C headers "just in case," those writes will start failing.

Existing buckets that actually contain SSE-C objects? No changes. AWS won't touch those.
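To find automation that still sends SSE-C parameters "just in case," a plain text search over your scripts is a reasonable first pass. This sketch looks for the AWS CLI flags (`--sse-c`, `--sse-c-key`, `--sse-customer-*`), the SDK parameter prefix `SSECustomer`, and the raw request header prefix. `find_ssec_usage` is a hypothetical helper, and a text search will miss parameters that are assembled dynamically.

```shell
#!/bin/sh
# find_ssec_usage DIR
# Lists file:line:text for every line under DIR that mentions an SSE-C
# flag, SDK parameter, or request header. Exits non-zero if none are found.
find_ssec_usage() {
    grep -rniF \
        -e '--sse-c' \
        -e 'SSECustomer' \
        -e 'x-amz-server-side-encryption-customer' \
        -- "$1"
}

# Example:
# find_ssec_usage ./deploy-scripts
```

The `--sse-c` pattern is a fixed-string match, so it also catches `--sse-c-key`, `--sse-c-copy-source`, and the `--sse-customer-*` flags used by `aws s3api`.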

Who this affects

If you're using AWS KMS (SSE-KMS) or S3-managed keys (SSE-S3) for encryption, this change does nothing to you. Your buckets already don't use SSE-C.

If you're one of the teams still on SSE-C, you'll want to:

- Audit which buckets actually use SSE-C (aws s3api get-bucket-encryption)

- Plan a migration to KMS for buckets that don't strictly need SSE-C

- Explicitly re-enable SSE-C on new buckets where it's genuinely required

The rollout covers 37 AWS Regions, including GovCloud and China regions, and will complete over the next few weeks.

What do these changes tell us about where S3 is heading?

If I look at S3 Files and the SSE-C default together, they tell the same story: AWS is reducing the reasons you'd reach for anything other than S3.

Need file system access? You used to need EFS plus sync scripts. Now you mount S3 directly. Need encryption with your own keys? You used to reach for SSE-C. Now AWS is steering you toward KMS, which handles key management for you.

S3 now stores over 500 trillion objects and handles roughly 200 million requests per second. It turned 20 years old last month. And instead of letting it coast, AWS gave it the most significant capability upgrade since S3 Intelligent-Tiering launched in 2018.

For my own projects, I'm already replacing two EFS-backed data pipelines with S3 Files mounts. The sync cron jobs are gone. The drift alerts are gone. One mount point, one storage bill, and a 60-second commit window I can easily live with.

If you've been running parallel storage systems just to get file access to your S3 data, this week is a good week to rethink that architecture.

For the full details, see the official S3 Files announcement, the S3 Files product page, and the SSE-C security default announcement.
