If you tried to open X (Twitter), Canva, or Discord on December 5th, you likely saw a 500 error. The internet didn't just blink; it stumbled hard.

The irony? The outage wasn't caused by a massive DDoS attack or a hacker group. It was caused by the defense against one.

As engineers, we often talk about "Blast Radius" and "Canary Deployments." The recent Cloudflare incident is a masterclass in how fragile global infrastructure can be, and in how a single line of Lua code can take down about 28% of the HTTP traffic Cloudflare serves.

Here is the technical breakdown of the React2Shell vulnerability and the Cloudflare patch that went wrong.

What was the React2Shell vulnerability (CVE-2025-55182)?

Before we get to the outage, we need to understand the panic. On December 3, 2025, a critical vulnerability (CVSS 10.0) was disclosed in React Server Components (RSC).

- The Vulnerability: Dubbed "React2Shell," it affects React 19 and Next.js (versions 15.x and 16.x).

- The Exploit: It allows unauthenticated Remote Code Execution (RCE). An attacker can send a specially crafted HTTP request to a server using the "Flight" protocol (used by RSC) and execute arbitrary code.

- The Threat: Because this exploits the deserialization of data on the server, standard firewalls often miss it. It looks like legitimate traffic until it hits the React server logic.

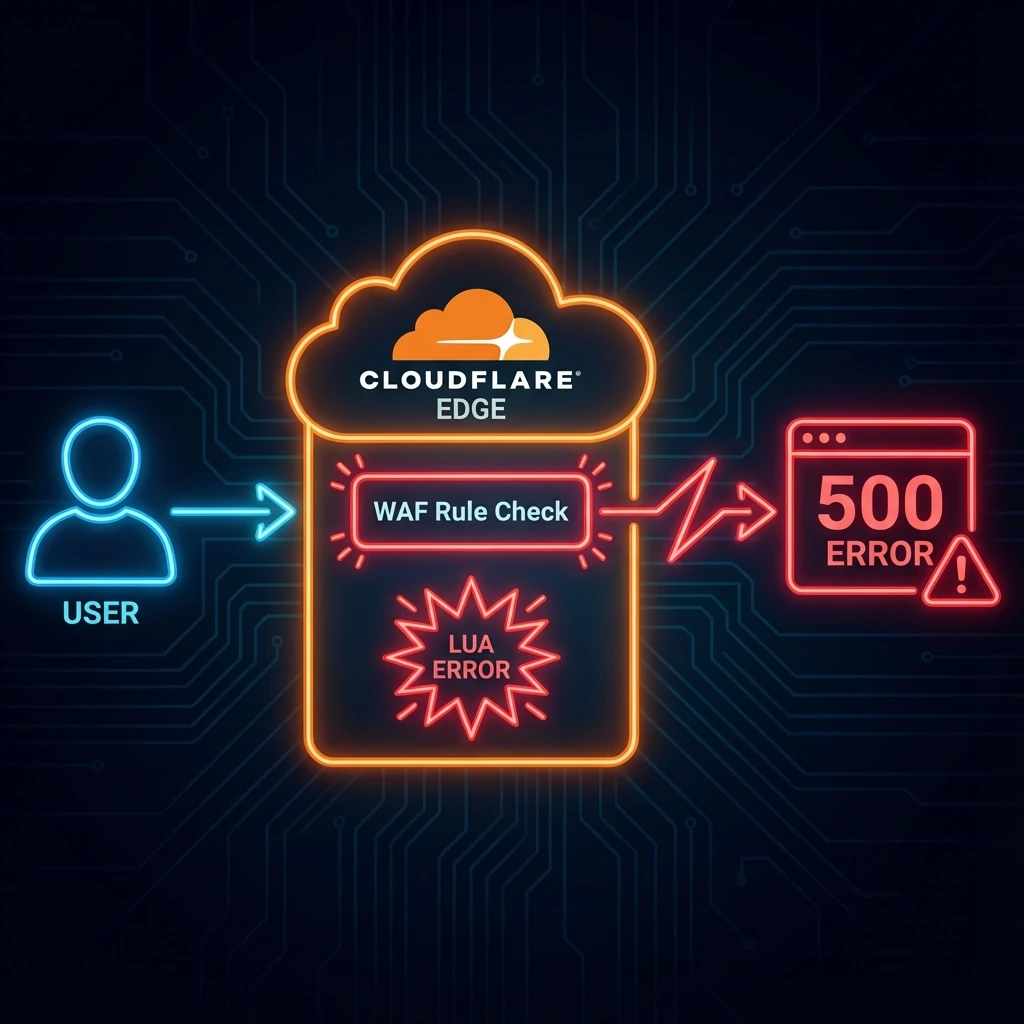

Cloudflare, acting as the shield for millions of websites, decided to roll out a global WAF (Web Application Firewall) rule to block these malicious payloads before they could reach customer servers.

How did Cloudflare's emergency patch cause the outage?

Timeline: December 5, 2025, around 08:47 UTC.

Cloudflare engineers needed to inspect the bodies of incoming requests to detect the React2Shell exploit pattern. To do this effectively for Next.js applications, they needed to increase the WAF's request body buffer size to 1MB (matching the Next.js default).
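Why the buffer size matters can be shown with a minimal sketch. It assumes an inspector that only scans the part of the body that fits in its buffer. The `inspectBody` and `paddedBody` functions, the `MARKER` string, and the 128 KB "small" limit are all hypothetical stand-ins for illustration, not Cloudflare's implementation and not a real exploit signature.

```typescript
// Hypothetical marker standing in for a malicious pattern; not a real signature.
const MARKER = "EVIL";

// Convert an ASCII string to bytes without relying on platform APIs.
function asciiBytes(text: string): Uint8Array {
  const out = new Uint8Array(text.length);
  for (let i = 0; i < text.length; i++) out[i] = text.charCodeAt(i) & 0x7f;
  return out;
}

// Scan only the first `bufferLimit` bytes, as a size-limited inspector would.
function inspectBody(body: Uint8Array, bufferLimit: number): boolean {
  const needle = asciiBytes(MARKER);
  const end = Math.min(body.length, bufferLimit);
  for (let i = 0; i + needle.length <= end; i++) {
    let match = true;
    for (let j = 0; j < needle.length; j++) {
      if (body[i + j] !== needle[j]) {
        match = false;
        break;
      }
    }
    if (match) return true;
  }
  return false;
}

// Build a body of `padding` zero bytes followed by the marker.
function paddedBody(padding: number): Uint8Array {
  const body = new Uint8Array(padding + MARKER.length);
  body.set(asciiBytes(MARKER), padding);
  return body;
}

const SMALL_LIMIT = 128 * 1024; // assumed smaller limit, for illustration only
const ONE_MB = 1024 * 1024; // the size named in the article

const sampleBody = paddedBody(200 * 1024); // marker sits 200 KB into the body
const seenWithSmallBuffer = inspectBody(sampleBody, SMALL_LIMIT); // marker is past the buffer
const seenWithLargeBuffer = inspectBody(sampleBody, ONE_MB); // marker is inside the buffer
```

With the small limit the marker is never examined, so a rule built on it would let the request through. That is the gap a larger buffer is meant to close.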

Here is the sequence of events that led to disaster:

- The Config Change: They deployed a change to increase the buffer size to 1MB.

- The Conflict: They realized an internal WAF testing tool didn't support this larger buffer size. Since the tool wasn't critical for customer traffic, they deployed a second change to disable the test tool.

- The Lurking Bug: This is where it gets technical. Disabling that tool triggered a dormant bug in their request routing logic (written in Lua).

- The Crash: The code attempted to access a field called execute on a module that was now nil (null) because the test tool was disabled.

What Lua error caused 28% of Cloudflare's traffic to fail?

According to Cloudflare's post-mortem, the specific error was a Lua runtime error (the equivalent of a null pointer exception) in their NGINX-based proxy (FL1):

    Failed to run module rulesets callback late_routing:
    /usr/local/nginx-fl/lua/modules/init.lua:314:
    attempt to index field 'execute' (a nil value)

Translation for Java/JS Devs:

Imagine code that assumes a service is always instantiated and calls service.execute() without checking. When the "test tool" was toggled off, the service became null. The call threw an unhandled exception, and the proxy returned an HTTP 500 to the user.

Because this logic sits at the "edge" (the very first point of contact for traffic), the request died instantly. It didn't matter if your backend was up; Cloudflare couldn't route the request to you.
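The service.execute() analogy can be sketched in TypeScript. The `Modules` shape, `loadModules`, `lateRouting`, and `handleRequest` are hypothetical names chosen for illustration. The real code is Lua, and Cloudflare's actual module layout is not shown in this article.

```typescript
// Hypothetical shapes for illustration; not Cloudflare's actual module layout.
interface TestTool {
  execute(path: string): string;
}

interface Modules {
  testTool?: TestTool; // absent once the tool is switched off
}

// Build the module table from a flag, the way a kill switch might.
function loadModules(testToolEnabled: boolean): Modules {
  return testToolEnabled
    ? { testTool: { execute: (path: string) => `checked ${path}` } }
    : {};
}

// Routing step written on the assumption that the tool is always present.
function lateRouting(modules: Modules, path: string): string {
  // The non-null assertion (!) silences the compiler but not the runtime.
  return modules.testTool!.execute(path);
}

// Edge handler: any error escaping the routing step becomes a 500.
function handleRequest(modules: Modules, path: string): number {
  try {
    lateRouting(modules, path);
    return 200;
  } catch {
    return 500;
  }
}

const statusWithTool = handleRequest(loadModules(true), "/home");
const statusWithoutTool = handleRequest(loadModules(false), "/home");
```

In this sketch `statusWithTool` is 200 and `statusWithoutTool` is 500, even though nothing about the request or the backend changed. Only the flag did.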

How much of the internet went down and for how long?

For about 25 minutes, chaos ensued.

- 28% of global HTTP traffic served by Cloudflare returned 500 errors.

- Impacted Services: X (Twitter), LinkedIn, Canva, Discord, and thousands of Next.js apps hosted on Vercel (which uses Cloudflare under the hood).

- Resolution: Cloudflare identified the Lua error and reverted the change by 09:12 UTC.

What should every developer learn from the React2Shell incident?

1. The "Null Pointer" is Still the Billion Dollar Mistake

It doesn't matter if it's Java, JavaScript, or Lua. Unchecked null/nil references are among the most common causes of sudden death in production. In TypeScript/Next.js, this is why we use Optional Chaining (?.) so extensively.

- Takeaway: Never assume a config object or service exists just because it did yesterday.
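As a minimal sketch of that takeaway, optional chaining combined with an explicit default keeps a missing config branch from throwing. The `AppConfig` shape, the `bodyLimit` function, and the default value are assumptions made up for this example.

```typescript
// Hypothetical config shape; field names are illustrative.
interface AppConfig {
  waf?: {
    bodyLimitBytes?: number;
  };
}

// Assumed fallback used when the setting is missing.
const DEFAULT_BODY_LIMIT = 128 * 1024;

// Read a nested setting without assuming any parent object exists.
function bodyLimit(config: AppConfig | undefined): number {
  // ?. short-circuits to undefined; ?? supplies the explicit default.
  return config?.waf?.bodyLimitBytes ?? DEFAULT_BODY_LIMIT;
}

const fromFullConfig = bodyLimit({ waf: { bodyLimitBytes: 1024 * 1024 } });
const fromEmptyConfig = bodyLimit({});
const fromMissingConfig = bodyLimit(undefined);
```

One caveat on the design: optional chaining hides the missing value instead of reporting it, so the default should be a deliberate choice and a missing config is usually worth logging as well.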

2. Test Your "Kill Switches"

Cloudflare broke because they turned off a testing tool. We often test our features, but we rarely test the removal of a feature.

- Takeaway: If you have a feature flag to disable a module, test what happens when that flag is actually set to false in a staging environment first.
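One way to make this routine is to run the same handler under every on/off combination of its flags and report the combinations that throw. This is a sketch under assumptions: the `Flags` type, the two flag names, and the `handle` function are hypothetical, and a real service would plug in its own flags and request path.

```typescript
// Hypothetical feature flags; substitute the flags your service actually reads.
type Flags = { testTool: boolean; largeBuffer: boolean };

// Generate every on/off combination for the given flag names.
function allCombinations(names: (keyof Flags)[]): Flags[] {
  const combos: Flags[] = [];
  const total = 1 << names.length;
  for (let mask = 0; mask < total; mask++) {
    const flags: Flags = { testTool: false, largeBuffer: false };
    names.forEach((name, bit) => {
      flags[name] = (mask & (1 << bit)) !== 0;
    });
    combos.push(flags);
  }
  return combos;
}

// Example handler under test: tolerates the test tool being switched off.
function handle(flags: Flags): number {
  const tool = flags.testTool ? { execute: () => "ok" } : undefined;
  tool?.execute();
  return flags.largeBuffer ? 1024 * 1024 : 128 * 1024;
}

// Run a handler under every combination and collect the ones that throw.
function failingCombinations(handler: (flags: Flags) => unknown): Flags[] {
  return allCombinations(["testTool", "largeBuffer"]).filter((flags) => {
    try {
      handler(flags);
      return false;
    } catch {
      return true;
    }
  });
}

const failures = failingCombinations(handle); // empty when every flag state is safe
```

The number of combinations doubles with every flag, so for a long flag list it may be more practical to test each flag's off state individually.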

3. Infrastructure as Code (IaC) is Scary

We are moving toward a world where a single config file controls the security of the entire internet.

- Takeaway: If you are building the "Visual Docker Compose" tool (like I am currently), ensure you have validation layers. A bad config shouldn't just fail; it should fail safely (fail open or fail closed, depending on security needs).
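A validation layer of that kind might look like the sketch below. It validates a candidate config before it replaces the live one, and keeps the last known good config when validation fails. The `ProxyConfig` fields and the `validate` and `applyConfig` functions are hypothetical. Keeping the previous config is only one possible fail-safe policy, and it may not suit every security requirement.

```typescript
// Hypothetical config shape; field names are illustrative.
interface ProxyConfig {
  bodyLimitBytes: number;
  testToolEnabled: boolean;
}

type Validation =
  | { ok: true; config: ProxyConfig }
  | { ok: false; error: string };

// Check an untrusted object before it is allowed to replace the live config.
function validate(candidate: unknown): Validation {
  if (typeof candidate !== "object" || candidate === null) {
    return { ok: false, error: "config must be an object" };
  }
  const fields = candidate as Record<string, unknown>;
  const limit = fields["bodyLimitBytes"];
  const enabled = fields["testToolEnabled"];
  if (typeof limit !== "number" || !Number.isInteger(limit) || limit <= 0) {
    return { ok: false, error: "bodyLimitBytes must be a positive integer" };
  }
  if (typeof enabled !== "boolean") {
    return { ok: false, error: "testToolEnabled must be a boolean" };
  }
  return { ok: true, config: { bodyLimitBytes: limit, testToolEnabled: enabled } };
}

// Apply a new config only if it validates; otherwise keep the current one.
function applyConfig(current: ProxyConfig, candidate: unknown): ProxyConfig {
  const result = validate(candidate);
  return result.ok ? result.config : current;
}

const liveConfig: ProxyConfig = { bodyLimitBytes: 128 * 1024, testToolEnabled: true };
const afterGoodChange = applyConfig(liveConfig, {
  bodyLimitBytes: 1024 * 1024,
  testToolEnabled: false,
});
const afterBadChange = applyConfig(liveConfig, { bodyLimitBytes: "1MB" });
```

Note that validation alone would not have caught a bug like the one above, because the new config was well-formed. The crash came from code that could not handle a valid state, which is why this complements flag testing but does not replace it.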

Final Thoughts

The React2Shell vulnerability is serious. If you are running Next.js 15 or 16, update immediately to the patched versions (Next.js 15.5.7+ or 16.0.7+).

Cloudflare took a bullet for us by trying to patch it globally, but they tripped on their own shoelaces. It's a humbling reminder that even the giants of the web are just one nil value away from a blackout.

📚 Sources & References

- Cloudflare Official Post-Mortem: Cloudflare outage on December 5, 2025

- Vulnerability Details: CVE-2025-55182


- Next.js Security Advisory: Vercel Security Bulletin
