Anthropic shipped Claude Opus 4.7 yesterday, and if you are on Opus 4.6 in production, your next deploy is going to break. I spent yesterday evening migrating my own Claude Code setup, my API agent loops, and a small app I maintain that calls Claude directly. The new model is faster at real coding work and it sees screenshots at a resolution I did not think we would hit this year. It is also the first Claude release where the upgrade needed actual code changes on my side.

This is the full deep dive and migration guide I wish someone had handed me yesterday. Benchmarks, every new feature, every breaking change, a real cost estimate for the new tokenizer, and a migration checklist you can run through in one sitting. By the end you will know whether to upgrade today, next week, or wait.

What is Claude Opus 4.7 and why does it matter?

Claude Opus 4.7 is Anthropic's most capable generally available model, released on April 16, 2026 across Claude products, the API, Amazon Bedrock, Google Cloud Vertex AI, and Microsoft Foundry. The API model ID is claude-opus-4-7. Pricing sits at $5 per million input tokens and $25 per million output tokens, the same sticker price as Opus 4.6.

The context window is 1M tokens at standard pricing with no long-context premium, and the max output is 128k tokens. If you ever priced out a 1M-token request on another vendor, you already know how unusual that is.
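A minimal pre-flight check makes the two limits concrete. It assumes, as on earlier Claude models, that prompt tokens plus max_tokens must fit inside the context window. The constants mirror the numbers above, and `fits_context` is a hypothetical helper that you would feed with your own token counts.

```python
# Limits quoted above for claude-opus-4-7.
CONTEXT_WINDOW = 1_000_000  # tokens
MAX_OUTPUT = 128_000        # tokens


def fits_context(prompt_tokens: int, max_tokens: int) -> bool:
    """Return True if a request stays inside both limits.

    Assumption: prompt tokens and max_tokens share the context window,
    as on earlier Claude models.
    """
    if max_tokens > MAX_OUTPUT:
        return False
    return prompt_tokens + max_tokens <= CONTEXT_WINDOW


print(fits_context(850_000, 128_000))  # True
print(fits_context(900_000, 128_000))  # False
```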

There is a second thing worth stating out loud. Opus 4.7 sits between the Opus 4.6 release from February and the still-gated Claude Mythos that shipped on April 7 through Project Glasswing. Mythos is the one Anthropic refuses to put on the public API because it can autonomously find real zero-days. Opus 4.7 is the model most of us actually get to run, and it is the one reclaiming SOTA on coding benchmarks from GPT-5.4 this week.

In my head, the quick mental model is: Opus 4.6 is the last of the old regime, Mythos is the one you cannot have, and Opus 4.7 is the production daily driver for the rest of the quarter.

How much better is Opus 4.7 at coding benchmarks?

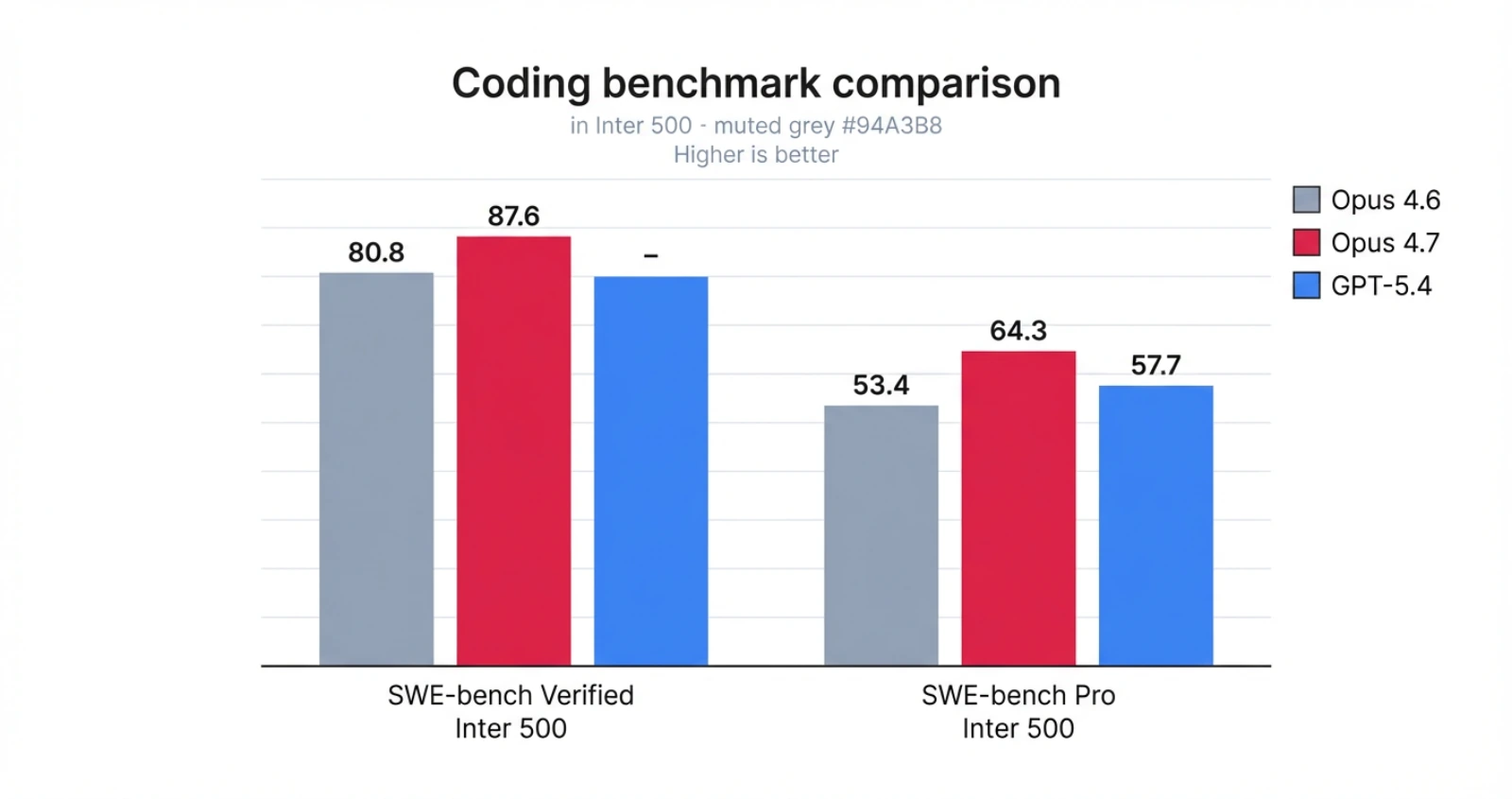

Opus 4.7 is meaningfully better than Opus 4.6 on every coding benchmark Anthropic publishes, and it beats GPT-5.4 on SWE-bench Pro by about 7 points. The numbers are not marketing vibes. They are the same benchmarks every vendor publishes, and this is the first time in months a public Claude release has taken the top slot on agentic coding.

Here is the short version of the scorecard.

| Benchmark | Opus 4.6 | Opus 4.7 | Notes |
|---|---|---|---|
| SWE-bench Verified | 80.8% | 87.6% | Nearly 7-point jump |
| SWE-bench Pro | 53.4% | 64.3% | Beats GPT-5.4 at 57.7% |
| Terminal-Bench 2.0 | - | 69.4% | New high for Claude |
| Rakuten-SWE-Bench | baseline | 3x production tasks resolved | Real enterprise tasks |
| Internal coding eval | baseline | +13% resolution | Anthropic internal |

Two things stand out beyond the raw numbers. First, Anthropic says Opus 4.7 shows a 14% improvement on complex multi-step workflows while using fewer tokens and producing roughly one third the tool errors of 4.6. Fewer tool errors is the quieter story here. If you have ever watched an agent loop rack up retries because a tool call went sideways, one third the errors compounds into real latency and real cost savings.

Second, Opus 4.7 is the first Claude model to pass what Anthropic calls implicit-need tests. Those are tasks where the model has to infer which tool or action is required, instead of being told directly in the prompt. In my own Claude Code sessions yesterday, this was the single biggest felt difference. I used to prefix prompts with "check git log before editing" out of habit. With 4.7, I stopped doing that and it checked anyway.
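The tool-error point is easier to feel with a back-of-the-envelope model. Assume, hypothetically, that failed tool calls are retried until they succeed and that failures are independent. Each successful call then costs 1 / (1 - p) attempts on average for a failure rate p. The 15% and 5% rates below are made-up illustration values, not Anthropic figures.

```python
def expected_attempts(calls: int, failure_rate: float) -> float:
    """Expected tool-call attempts needed for `calls` successful calls.

    Assumes independent failures and retry-until-success, so each call
    takes 1 / (1 - p) attempts on average (geometric distribution).
    """
    if not 0 <= failure_rate < 1:
        raise ValueError("failure_rate must be in [0, 1)")
    return calls / (1 - failure_rate)


# Hypothetical 40-call loop: a 15% failure rate vs one third of that.
before = expected_attempts(40, 0.15)
after = expected_attempts(40, 0.05)
print(f"{before:.1f} attempts vs {after:.1f}")  # 47.1 attempts vs 42.1
```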

You can verify the numbers for yourself at the official Claude Opus 4.7 announcement and the VentureBeat launch writeup.

What new features does Opus 4.7 add?

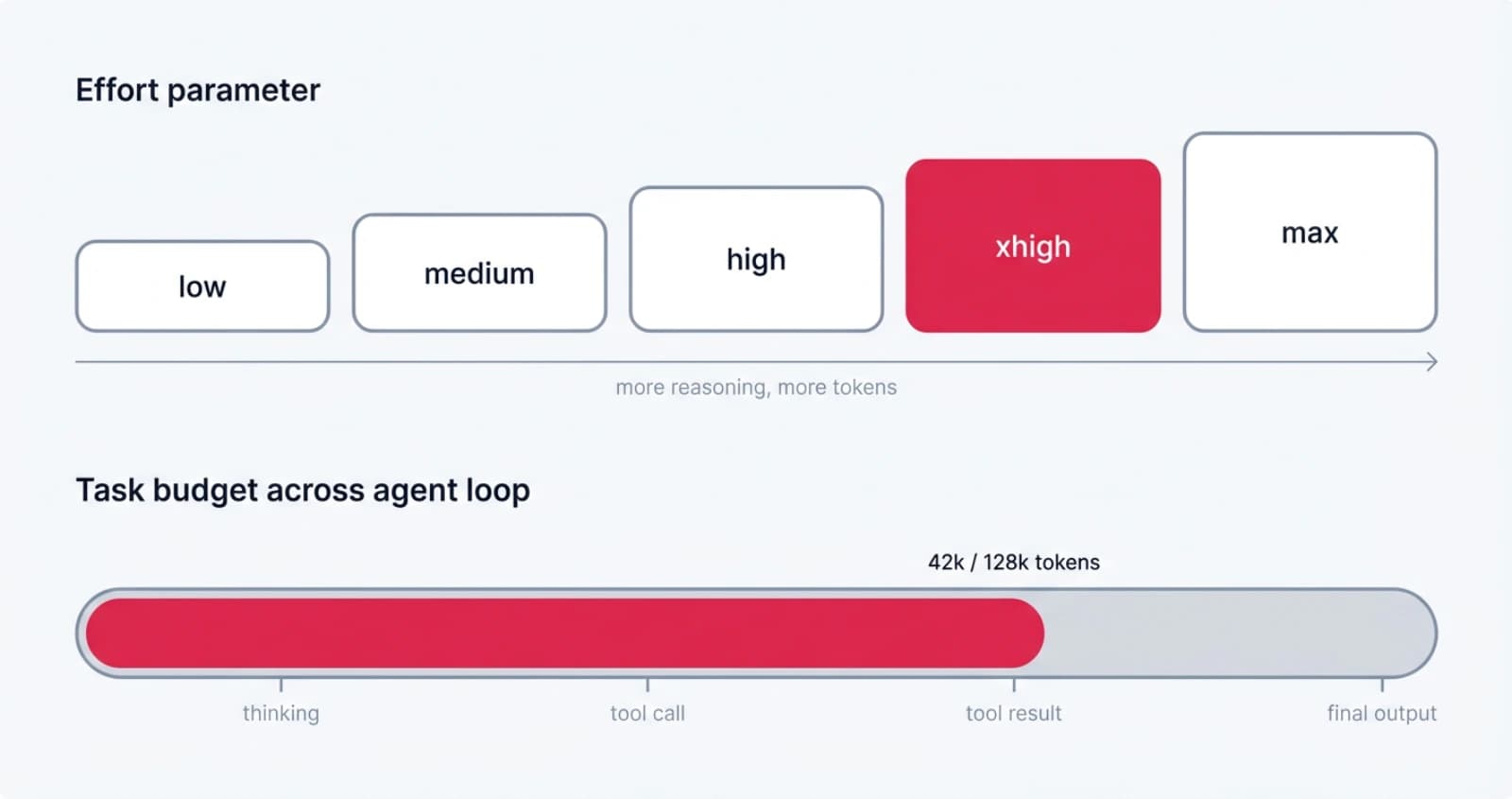

Opus 4.7 adds three developer-facing features that actually change how you write agent code. Those are the xhigh effort level, task budgets, and high-resolution vision at 3.75 megapixels.

The new xhigh effort level

The effort parameter already lets you trade intelligence for speed and cost, but 4.7 introduces a new level called xhigh that slots between high and max. Anthropic recommends starting at xhigh for coding and agentic tasks, and at least high for anything else that is intelligence-sensitive.

Claude Code defaults to xhigh across every plan now. That is a big deal because it means the same prompt that cost you X tokens yesterday will silently cost more today unless you explicitly lower the effort.
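If one client serves several workloads, it can help to encode that recommendation in one place instead of scattering effort strings around. This is a sketch under stated assumptions:

- `pick_effort` and `request_kwargs` are hypothetical helpers.
- The `medium` fallback for routine work and the 16000 max_tokens are illustrative choices.
- The kwargs only mirror the request shape shown in this post, and nothing is sent.

```python
def pick_effort(workload: str, intelligence_sensitive: bool = False) -> str:
    """Hypothetical router: xhigh for coding/agentic, at least high otherwise."""
    if workload in {"coding", "agentic"}:
        return "xhigh"
    if intelligence_sensitive:
        return "high"
    return "medium"  # assumption: a cheaper default for routine work


def request_kwargs(workload: str, prompt: str, **flags) -> dict:
    """Build kwargs for client.messages.create without sending anything."""
    return {
        "model": "claude-opus-4-7",
        "max_tokens": 16000,
        "output_config": {"effort": pick_effort(workload, **flags)},
        "messages": [{"role": "user", "content": prompt}],
    }


print(request_kwargs("coding", "Refactor this service layer.")["output_config"])
# {'effort': 'xhigh'}
```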

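```python
# Claude Opus 4.7 with explicit effort
import anthropic

client = anthropic.Anthropic()  # reads ANTHROPIC_API_KEY from the environment

# Note: with a max_tokens this large, the Python SDK may ask you to stream
# (client.messages.stream) or to pass an explicit timeout instead.
response = client.messages.create(
    model="claude-opus-4-7",
    max_tokens=64000,
    output_config={"effort": "xhigh"},
    messages=[{"role": "user", "content": "Refactor this service layer."}],
)
```

Task budgets, the advisory cap for agentic loops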

Task budgets are the new feature I am most excited about. A task budget is a rough token target for the full agentic loop, including thinking, tool calls, tool results, and final output. The model sees a running countdown and uses it to prioritize work and finish the task before it runs out.

The important distinction is that max_tokens is a hard per-request cap that the model never sees. A task budget is advisory, the model is aware of it, and it self-moderates. You set both at once for agent work that has a real budget ceiling but needs the model to plan.
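The distinction is easiest to see as bookkeeping. This is a hypothetical client-side tracker, not how Anthropic implements task budgets. `max_tokens` bounds the output of each individual request. The budget is a running total across the loop, including tool results, and it can be overrun without anything being cut off.

```python
from dataclasses import dataclass


@dataclass
class LoopBudget:
    """Hypothetical client-side view of an advisory loop budget."""

    total: int
    spent: int = 0

    def record(self, output_tokens: int, max_tokens: int,
               tool_result_tokens: int = 0) -> None:
        # max_tokens is a hard cap on one request's output...
        if output_tokens > max_tokens:
            raise ValueError("one request's output cannot exceed max_tokens")
        # ...while the budget is a running total over the whole loop.
        self.spent += output_tokens + tool_result_tokens

    @property
    def remaining(self) -> int:
        # Advisory: this can go negative; nothing is cut off.
        return self.total - self.spent


budget = LoopBudget(total=40_000)
for out, tool in ((12_000, 3_000), (15_000, 4_000), (9_000, 2_000)):
    budget.record(out, max_tokens=20_000, tool_result_tokens=tool)
print(budget.remaining)  # -5000: over budget, yet every request was legal
```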

```python
import anthropic

client = anthropic.Anthropic()

response = client.beta.messages.create(
    model="claude-opus-4-7",
    max_tokens=128000,
    output_config={
        "effort": "high",
        # Advisory budget for the whole agentic loop, not a hard cap.
        "task_budget": {"type": "tokens", "total": 128000},
    },
    messages=[
        {"role": "user", "content": "Review the codebase and propose a refactor plan."}
    ],
    betas=["task-budgets-2026-03-13"],
)
```

A few rules to remember. The minimum budget is 20k tokens. Budgets are not hard caps, so the model can go over if it really needs to. Do not set a budget for open-ended tasks where quality matters more than speed, because a tight budget makes the model cut corners. The full spec is on the task budgets documentation page.
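Because the 20k minimum is easy to trip over, a small builder can check it before a request leaves your code. `task_budget_config` is a hypothetical helper. Its field names simply mirror the request above, so confirm them against the task budgets documentation before relying on it.

```python
MIN_TASK_BUDGET = 20_000  # minimum stated in the rules above


def task_budget_config(total: int, effort: str = "high") -> dict:
    """Build an output_config with a task budget, rejecting budgets under the minimum."""
    if total < MIN_TASK_BUDGET:
        raise ValueError(f"task budget must be at least {MIN_TASK_BUDGET} tokens")
    return {
        "effort": effort,
        "task_budget": {"type": "tokens", "total": total},
    }


print(task_budget_config(64_000))
# {'effort': 'high', 'task_budget': {'type': 'tokens', 'total': 64000}}
```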

High-resolution vision at 3.75 megapixels

Opus 4.7 is the first Claude model that accepts images up to 2576 pixels on the long edge, or 3.75 megapixels. Earlier Claude models topped out at 1568 pixels on the long edge (about 1.15 megapixels), so this is more than three times the pixel budget. Coordinates are now 1:1 with actual pixels, so there is no scale-factor math when the model returns bounding boxes.

This matters most for computer use, screenshot agents, and document understanding. I had an internal screenshot workflow that was choking on a dense admin dashboard because small buttons blurred out at 1.15 megapixels. Re-running it on 4.7 at full resolution, the model found the buttons on the first try. One caveat: high-resolution images use more tokens, so downsample if you do not need the fidelity. Vision details and examples are on the Images and vision docs page.
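If you do downsample, the scale-factor math comes back to you. This minimal sketch treats both 2576 px on the long edge and 3.75 MP as limits. It computes the largest same-aspect size that fits, then maps coordinates the model returns on the resized image back to original pixels. `fit_image` and `to_original` are hypothetical helpers; do the actual resize with whatever image library you already use.

```python
import math

MAX_LONG_EDGE = 2576      # pixels, as quoted above
MAX_PIXELS = 3_750_000    # 3.75 megapixels


def fit_image(width: int, height: int) -> tuple[int, int]:
    """Largest same-aspect size within both limits (never upscales)."""
    scale = min(
        1.0,
        MAX_LONG_EDGE / max(width, height),
        math.sqrt(MAX_PIXELS / (width * height)),
    )
    return max(1, int(width * scale)), max(1, int(height * scale))


def to_original(x: float, y: float, original: tuple[int, int],
                resized: tuple[int, int]) -> tuple[float, float]:
    """Map a point reported on the resized image back to original pixels."""
    return x * original[0] / resized[0], y * original[1] / resized[1]


size = fit_image(3024, 1964)  # e.g. a full-resolution laptop screenshot
print(size)
print(to_original(100, 50, (3024, 1964), size))
```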

What breaking changes should you know before upgrading from Opus 4.6?

The Messages API has four breaking changes on Claude Opus 4.7 that will make existing code throw 400s or silently behave differently. Claude Managed Agents is unaffected, so if you only use Managed Agents you can skip this section.

Extended thinking budgets are removed

The thinking.budget_tokens field is gone. Passing thinking: {"type": "enabled", "budget_tokens": N} now returns a 400 error. Adaptive thinking is the only thinking-on mode, and Anthropic says it reliably outperforms budgeted extended thinking on internal evals.

```python
# Before (Opus 4.6)
thinking = {"type": "enabled", "budget_tokens": 32000}

# After (Opus 4.7)
thinking = {"type": "adaptive"}
output_config = {"effort": "high"}
```

Adaptive thinking is off by default

This is the silent one. Requests with no thinking field now run without thinking at all. On Opus 4.6 you had thinking by default in most setups. You now have to set thinking: {"type": "adaptive"} explicitly if you want any reasoning on the request.

If you forget this, your output quality on hard tasks will drop and you will not see a 400. Just worse answers. Watch out.
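Both thinking changes can be handled in one place if your code assembles request kwargs before calling the SDK. The helper below is a hypothetical sketch with three jobs:

- It replaces any 4.6-style `thinking` setting with explicit adaptive thinking.
- It leaves deliberately disabled thinking alone.
- It adds an effort level.

Defaulting to `high` is an assumption, not a documented mapping from old budgets to effort levels.

```python
def migrate_thinking(kwargs: dict, effort: str = "high") -> dict:
    """Return a copy of Opus 4.6-style request kwargs with 4.7 thinking settings."""
    new = dict(kwargs)
    thinking = new.get("thinking") or {}
    # Keep requests that deliberately disabled thinking as they were.
    if thinking.get("type") != "disabled":
        # budget_tokens now returns a 400, and a missing `thinking` field
        # means no thinking at all, so send adaptive thinking explicitly.
        new["thinking"] = {"type": "adaptive"}
    output_config = dict(new.get("output_config") or {})
    output_config.setdefault("effort", effort)  # assumed default; tune per workload
    new["output_config"] = output_config
    return new


old = {
    "model": "claude-opus-4-7",
    "max_tokens": 64000,
    "thinking": {"type": "enabled", "budget_tokens": 32000},
    "messages": [{"role": "user", "content": "Plan the migration."}],
}
print(migrate_thinking(old)["thinking"])  # {'type': 'adaptive'}
```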

Sampling parameters are removed

Setting temperature, top_p, or top_k to any non-default value returns a 400 on Opus 4.7. This one tripped me up on a tool that was hardcoded to temperature=0 for "determinism."

```python
import anthropic

client = anthropic.Anthropic()

# This will now 400 on claude-opus-4-7
client.messages.create(
    model="claude-opus-4-7",
    max_tokens=1024,
    temperature=0.7,  # BadRequestError: sampling parameters not supported
    messages=[{"role": "user", "content": "Summarize this diff."}],
)
```

The safest migration is to drop these fields from every request and guide behavior via prompting instead. If you were using temperature=0 for determinism, note that it never actually guaranteed identical outputs anyway. The expectation was always probabilistic.
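If requests are assembled as kwargs dicts, dropping the fields can be a one-line filter applied before every Claude call. `strip_sampling` is a hypothetical helper that returns a copy and leaves the caller's dict untouched.

```python
SAMPLING_PARAMS = ("temperature", "top_p", "top_k")


def strip_sampling(kwargs: dict) -> dict:
    """Return a copy of request kwargs without sampling parameters."""
    return {k: v for k, v in kwargs.items() if k not in SAMPLING_PARAMS}


request = {
    "model": "claude-opus-4-7",
    "max_tokens": 1024,
    "temperature": 0,
    "messages": [{"role": "user", "content": "Summarize this diff."}],
}
print(sorted(strip_sampling(request)))  # ['max_tokens', 'messages', 'model']
```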

Thinking content is omitted by default

Thinking blocks still show up in the response stream, but their thinking field is empty unless you opt back in. The reasoning still happens. You just do not see it.

If your UI streams reasoning to users as it arrives, the new default looks like a long blank pause before the final output starts. Users will notice. The fix is one line:

```python
thinking = {
    "type": "adaptive",
    "display": "summarized",  # "omitted" is the new default
}
```

The full list of breaking changes is on the What's new in Claude Opus 4.7 docs page.
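Until the display change ships, the UI can at least avoid looking frozen. This hypothetical sketch assumes content blocks arrive as dicts shaped like Messages API content: a `thinking` block with a `thinking` string, or a `text` block. It renders a progress placeholder when a thinking block comes back empty instead of rendering nothing.

```python
def render_blocks(blocks: list[dict]) -> list[str]:
    """Turn response content blocks into display lines for a chat UI."""
    lines = []
    for block in blocks:
        if block.get("type") == "thinking":
            text = block.get("thinking", "")
            # With display "omitted", the block exists but its text is empty.
            lines.append(f"[thinking] {text}" if text else "[thinking...]")
        elif block.get("type") == "text":
            lines.append(block["text"])
    return lines


print(render_blocks([
    {"type": "thinking", "thinking": ""},
    {"type": "text", "text": "Done."},
]))  # ['[thinking...]', 'Done.']
```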

How does the new tokenizer change your real costs?

Opus 4.7 ships a new tokenizer that can use 1x to 1.35x as many tokens as Opus 4.6 for the same input text. The per-token price is unchanged. Because the same text can map to up to 35% more tokens, though, real invoices go up for most workloads even when nothing else changes.

I ran a quick test yesterday on an agent loop that summarizes GitHub PRs. Same prompt, same PR, same effort level. Here is what I measured on my setup.

| Metric | Opus 4.6 | Opus 4.7 | Delta |
|---|---|---|---|
| Input tokens | 12,430 | 14,820 | +19% |
| Output tokens | 2,110 | 2,480 | +18% |
| Total cost | $0.115 | $0.136 | +18% |

This is one data point on one workload, so take it as directional rather than authoritative. On plain English the tokenizer seems close to parity. On code-heavy prompts the gap widens. Anthropic's own docs confirm that where a workload lands in the 1.0x to 1.35x range depends on content type.
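The table's arithmetic is easy to reproduce from the list prices above, which also lets you project a worst case. This minimal sketch uses the $5 / $25 per million token prices. The 1.35x line assumes both input and output land at the top of the documented range; it is a projection, not a measurement.

```python
# List prices quoted above for Opus 4.6 and 4.7 (USD per token).
INPUT_PRICE = 5.00 / 1_000_000
OUTPUT_PRICE = 25.00 / 1_000_000


def request_cost(input_tokens: float, output_tokens: float) -> float:
    """Dollar cost of one request at the list prices."""
    return input_tokens * INPUT_PRICE + output_tokens * OUTPUT_PRICE


cost_46 = request_cost(12_430, 2_110)
cost_47 = request_cost(14_820, 2_480)
print(f"${cost_46:.3f} -> ${cost_47:.3f} ({cost_47 / cost_46 - 1:+.0%})")
# $0.115 -> $0.136 (+18%)

# Hypothetical worst case: the 4.6 counts scaled by the 1.35x ceiling.
print(f"${request_cost(12_430 * 1.35, 2_110 * 1.35):.3f}")  # $0.155
```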

There are three mitigations that actually work.

- Set a task budget. The model sees the countdown and prioritizes. On my PR summarizer, a 10k task budget pulled output costs back to near parity with 4.6. The rules above put the minimum at 20k tokens, so size your own budget accordingly.

- Drop from xhigh back to high. Going from high to xhigh adds a lot of tokens. If your workload is not reasoning-bound, high is usually fine and saves you the biggest chunk.

- Rebudget your max_tokens. If you set max_tokens based on 4.6 token counts, add headroom. This also matters for compaction triggers in agent loops that summarize when approaching the ceiling.

If you want the full cost write-up with more workload types, Finout published a good breakdown on the real cost story behind the unchanged price tag.

How do you migrate an existing Opus 4.6 app to 4.7?

The migration from Opus 4.6 to 4.7 is a one-sitting job for most apps. Here is the checklist I used on my own code yesterday. It works whether you are on the raw SDK, the AI SDK through Vercel AI Gateway, or LangChain.

Step 1: Update the model ID

Change every claude-opus-4-6 string to claude-opus-4-7. Search and replace. Check config files, prompts stored in databases, and feature flags that select the model at runtime.
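Model IDs that live in loaded config or feature-flag payloads are the ones a code search misses. This minimal sketch assumes the payload deserializes to plain dicts, lists, and strings. `bump_model_ids` is a hypothetical helper that returns an updated copy. It replaces the substring wherever it appears, so review any longer IDs that contain it before writing the result back.

```python
OLD_ID = "claude-opus-4-6"
NEW_ID = "claude-opus-4-7"


def bump_model_ids(value):
    """Recursively replace the old model ID in dicts, lists, and strings."""
    if isinstance(value, str):
        return value.replace(OLD_ID, NEW_ID)
    if isinstance(value, dict):
        return {k: bump_model_ids(v) for k, v in value.items()}
    if isinstance(value, list):
        return [bump_model_ids(v) for v in value]
    return value  # numbers, booleans, None pass through unchanged


flags = {
    "default_model": "claude-opus-4-6",
    "experiments": [{"arm": "b", "model": "claude-opus-4-6"}],
    "max_tokens": 64000,
}
print(bump_model_ids(flags)["experiments"][0]["model"])  # claude-opus-4-7
```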

Step 2: Remove sampling parameters

Grep for temperature, top_p, and top_k across your codebase.

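```shell
grep -rnE "(temperature|top_p|top_k)[\"']?[[:space:]]*[:=]" src/
```

The optional quote in the pattern also catches dict and JSON keys such as "temperature": 0. Delete every occurrence for Claude calls. If your code shares the same request builder across providers, gate the Claude branch separately or remove the fields for all models.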
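For the shared-builder case, one option is to gate by model inside the builder so other providers keep their sampling settings. In this hypothetical sketch, `build_request`, the `NO_SAMPLING_MODELS` set, and the simplified request shape are illustrative assumptions. Real multi-provider code would also need each provider's own request format.

```python
SAMPLING_PARAMS = ("temperature", "top_p", "top_k")
# Models that reject sampling parameters (per this post: Opus 4.7).
NO_SAMPLING_MODELS = {"claude-opus-4-7"}


def build_request(model: str, prompt: str, **sampling) -> dict:
    """Hypothetical shared builder that drops sampling params where they 400."""
    request = {
        "model": model,
        "max_tokens": 4096,
        "messages": [{"role": "user", "content": prompt}],
    }
    if model not in NO_SAMPLING_MODELS:
        # Only forward the known sampling keys to models that accept them.
        request.update({k: v for k, v in sampling.items() if k in SAMPLING_PARAMS})
    return request


print("temperature" in build_request("claude-opus-4-7", "hi", temperature=0))  # False
```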

Step 3: Swap extended thinking for adaptive thinking

Search for budget_tokens.

```shell
grep -rn "budget_tokens" src/
```

Replace with adaptive thinking plus an explicit effort level. If you used extended thinking budgets to control cost, task budgets are the replacement.

Step 4: Decide on thinking visibility

If your UI streams reasoning to users, add "display": "summarized" so they see progress. If you only log thinking server-side for analysis, leave the default.

Step 5: Rebudget max_tokens for the new tokenizer

Bump max_tokens on requests where you were running close to the ceiling. A 20% buffer covers most cases. Also check any prompt-compaction thresholds.
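The 20% rule of thumb is simple to apply mechanically. `rebudget_max_tokens` is a hypothetical helper that adds the buffer with integer math and caps the result at the 128k output ceiling quoted earlier.

```python
MAX_OUTPUT = 128_000  # Opus 4.7 max output quoted earlier in this post


def rebudget_max_tokens(old_max_tokens: int, buffer_pct: int = 20) -> int:
    """Add headroom to a 4.6-era max_tokens, capped at the output limit."""
    # Integer ceiling division avoids float rounding surprises.
    scaled = -(-old_max_tokens * (100 + buffer_pct) // 100)
    return min(MAX_OUTPUT, scaled)


print(rebudget_max_tokens(32_000))   # 38400
print(rebudget_max_tokens(120_000))  # 128000
```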

Step 6: Re-test prompts that relied on 4.6's warmer tone

Opus 4.7 is more direct and more literal. If you had prompts that said "be friendly and use emoji," those still work. If you had prompts that relied on the model silently generalizing an instruction from one item to another, 4.7 will not do that. Strip the scaffolding that told 4.6 to "double-check the slide layout" or "verify the output before returning," because 4.7 does that on its own now.

Step 7: Check for cyber safeguards

Opus 4.7 ships with real-time cybersecurity safeguards that refuse certain high-risk requests even for legitimate security research. If your product does security work, apply to Anthropic's Cyber Verification Program to preserve access.

The full step-by-step including automated codemods is on the Claude API migration guide.

Should you upgrade to Opus 4.7 now?

For most builders, upgrade this week. The coding benchmark gains are real, the agentic improvements show up in fewer tool retries and smarter tool selection, and the cost ceiling is manageable once you know about the tokenizer bump.

Here is my honest call by workload type.

- Agentic coding, Claude Code users, tool-heavy agents: upgrade today. The implicit-need inference alone changes the prompt-engineering tax on every session.

- Computer use, screenshot agents, document analysis: upgrade today. The 3.75 megapixel jump is the biggest vision upgrade in a Claude release since vision shipped.

- Plain chat apps with no tools: no rush. You get a small quality bump and a small cost bump. Pick a low-traffic Tuesday.

- Apps that hardcode temperature=0 for "determinism": do the migration work first, then ship. Every request will return a 400 until the sampling fields come out.

- Products that stream thinking to end users: migrate with the one-line display fix in the same deploy. Otherwise users see a dead pause before output and file bug reports.

- Security research tooling: apply to the Cyber Verification Program before the upgrade, not after. The new safeguards are stricter than 4.6's.

Also apply common sense. Run the upgrade on a preview environment before prod. Watch your cost dashboard for a week. If your task success rates hold and your invoice is within 20% of what you budgeted, you are in good shape.
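If you want that go/no-go rule written down, here is a hypothetical check. It reads "within 20%" as no more than 20% over budget. It also allows a one-point dip in success rate for noise. Both thresholds are assumptions to tune for your own workload.

```python
def upgrade_looks_healthy(
    success_before: float,
    success_after: float,
    budgeted_cost: float,
    actual_cost: float,
    cost_tolerance: float = 0.20,
    max_success_drop: float = 0.01,
) -> bool:
    """Hypothetical check: success rate held and cost within tolerance."""
    success_held = success_after >= success_before - max_success_drop
    cost_ok = actual_cost <= budgeted_cost * (1 + cost_tolerance)
    return success_held and cost_ok


print(upgrade_looks_healthy(0.90, 0.91, 1_000.0, 1_150.0))  # True
```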

Conclusion

Claude Opus 4.7 is the upgrade I have been waiting for, but it is also the first Claude release that required me to actually read the docs. The coding jump is real, the agent loop is smoother, and the vision resolution finally gets much closer to what Retina screenshots actually capture. At the same time, four breaking Messages API changes and a new tokenizer mean you cannot just bump the model ID and redeploy.

If you take one thing from this post, make it the migration checklist. Work through steps 1 to 7 on a branch today, run your tests, watch the cost dashboard for a day, and ship. That is the whole job. My prediction for the quarter is that the agent tooling ecosystem will reorganize around task budgets as the new primitive. An advisory budget that the model can see and moderate itself against is a much cleaner abstraction than stitching manual token counting into every custom loop. Watch for that pattern to show up in every major framework within a few weeks.

For more on Claude Opus 4.7, see the official Anthropic announcement, the What's new in Claude Opus 4.7 docs, and the detailed benchmark reporting from The Next Web.

Keep Reading

- Claude Mythos and Project Glasswing: the gated model that sits above Opus 4.7, why you cannot have it, and what that signals about where Anthropic is headed.

- Claude Skills vs MCP vs Projects: how the three Claude extensibility systems fit together, and where Opus 4.7's better memory tool lands in that picture.

- Vercel AI Gateway Deep Dive: route Opus 4.7 through the AI Gateway for cost tracking, fallbacks, and per-model observability without rewriting your client code.

- Google Antigravity: the competing agentic coding platform, for context on where Cursor, Windsurf, and Anthropic's own Claude Code fit in the same week.